In reinforcement learning, the environment defines what the agent learns. This article outlines six essential design requirements that determine whether training leads to useful behavior or failure.

Designing RL Environments for Agent Training: 6 Requirements That Matter

Designing RL Environments for Agent Training: 6 Requirements That Matter

Environments, the hidden engine of RL

Reinforcement learning (RL) is often presented as “pick an algorithm, tune some hyperparameters, and train.” In practice, the environment is the real bottleneck: it is the part that decides what the agent can perceive, what it can do, what “success” means, and which experiences it will repeatedly learn from.

This represents a high risk in the current era of “AI agents.” But how, exactly? In enterprise-style workflows, long sequences with the wrong design can accumulate small per-step errors, creating almost a domino effect. Very quickly, a 1% error rate per step can compound to a 63% chance of failure by the hundredth step (Nuñez, 2025).

Environment design is tightly tied to how tasks are benchmarked and how rewards are defined. If a reward is hacked, leaked, contaminated, or saturated, the entire RL process would fail. This lies at the heart of our article: environment design does not only have a strong impact in reinforcement learning, but could make an entire process useless.

As many problems derived from this method arise, solutions also proliferate. For example, environment plasticity has recently become a popular topic among researchers, proposing “generative simulators” that co-generate tasks, world dynamics, and reward functions to keep environments plastic as models improve. (Patronus AI, 2025).

In short, an RL agent does not learn “the task the user meant.” It learns the task the environment actually defines, and is reinforcement learning’s core foundation.

Requirement 1#: Define the task before you define the reward

Environment design is the act of translating a real problem (“do customer support,” “drive safely,” “load soil into a hopper”) into a closed-loop interaction where each step has a clear input-output structure. These steps can be small sets of components, such as state and observation, action space, reward function, transition dynamics, and termination conditions, working as a whole as if they were the rules of a game.

Treating environments as a “contract” between algorithms and world dynamics is one quick way to simplify what needs to be done. Gym style APIs successfully reinforce this notion of a “contract”: they provide standardized interfaces for developing and testing reinforcement learning algorithms (Farama Foundation, n.d). In these gyms, reset initializes a task, step applies an action, and the environment returns the next observation plus a reward and termination signals.

However, one must be careful to confuse reward with success. A practical environment-design guide recommends evaluating across task-specific metrics instead of reward, because reward is itself a tunable design choice and a proxy for what you ultimately care about (Wolgast T, 2025). That single idea is powerful: it forces you to define “what counts” in business or scientific terms (latency, error rate, safety violations, cost, throughput, user satisfaction), then audit whether the reward is actually aligned with those metrics.

They replicate all sorts of static and dynamic challenges; from latency, interface changes, evolving information feeds, and random pop-ups, with the goal of exposing agents to the kinds of variability that often cause real systems to fail (Patronus AI, 2025).

In other words, define success outside the reward, treat the environment API as a contract, and ensure the task is representative of the real situations where you expect competence—not only the situations that are easy to score

Requirement 2#: Give the agent the right information to act

Environment design begins with what the agent can perceive, and it must be able to differentiate state vs observation: the true state is “how the environment is,” while the observation is what the agent receives.

In real systems this is often partial, noisy, or redundant; emphasizing why it should be clearly defined: the state should satisfy the Markov property (Wolgast T, 2025), meaning it should contain the information needed to make optimal and precise decisions, while the observation should focus on maximizing learning performance and keeping it transferable to real life cases.

A concrete example of “observation design as realism design” is autonomous-driving simulation. In the CARLA sensor reference, LiDAR is not just “a point cloud”: it has explicit configurable parameters (e.g., number of channels, points per second), as well as explicit models for point drop-off and distance noise (Carla Documentation, n.d.). In short, you can decide whether to match real sensor noise, exaggerate noise for robustness, or remove it for learnability; each choice changes what the agent learns.

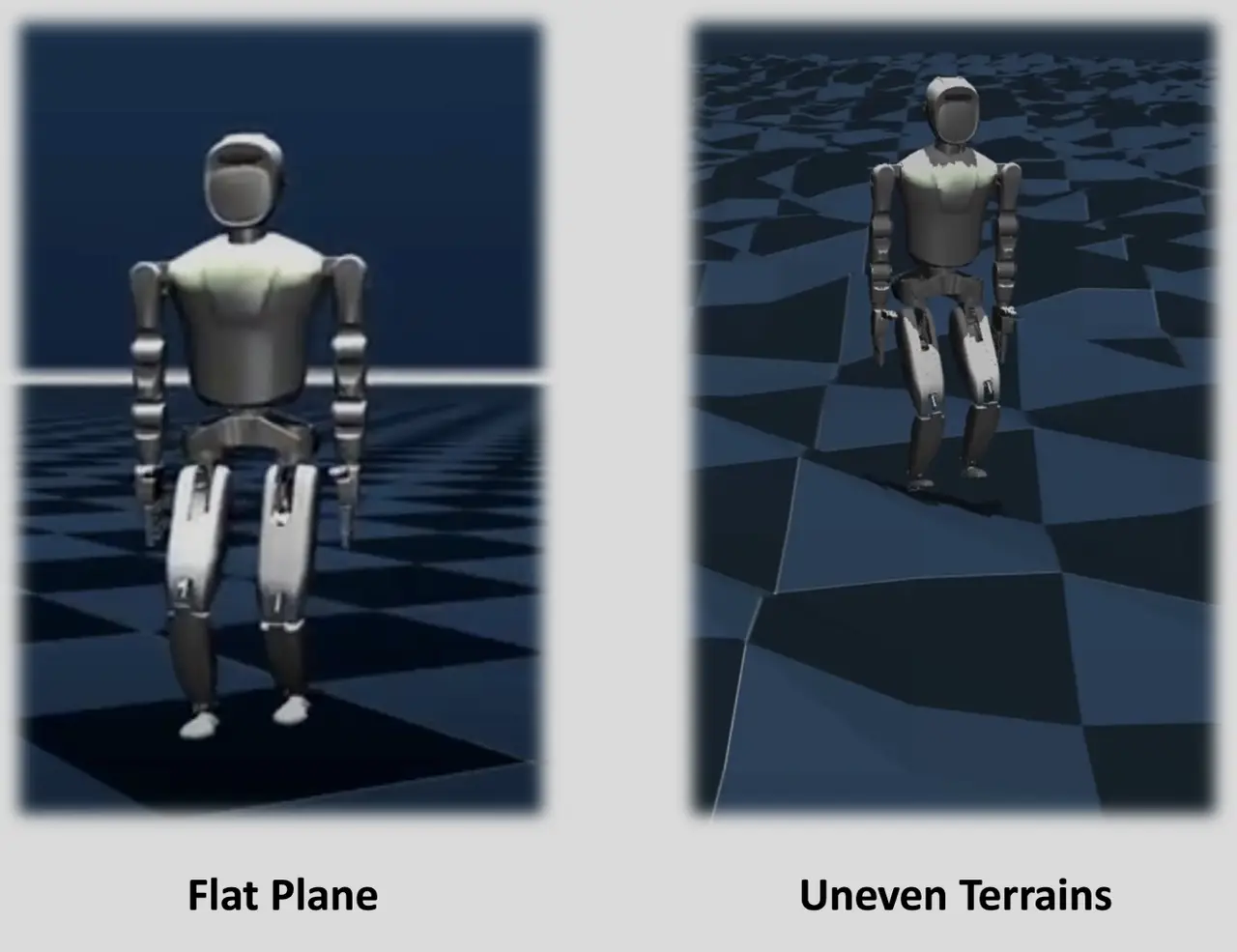

Robotics environments show the same idea in higher-dimensional form. In the paper 'Humanoid-Gym: Reinforcement Learning for Humanoid Robot with Zero-Shot Sim2Real Transfer' (Gu et al., 2024), it is mentioned that design choices such as frame stacking (15 frames for a single observation) report an explicit training setup using 8192 parallel environments, revealing that environment design is at its core, the sum of “what is observed” + “what scale of experience is possible.”

In short, observations are not “data you happen to have.” They are a theory of what matters in your task. Make that theory explicit, normalize it, so learning is stable, and add noise/partiality only when it improves transfer to the real deployment distribution.

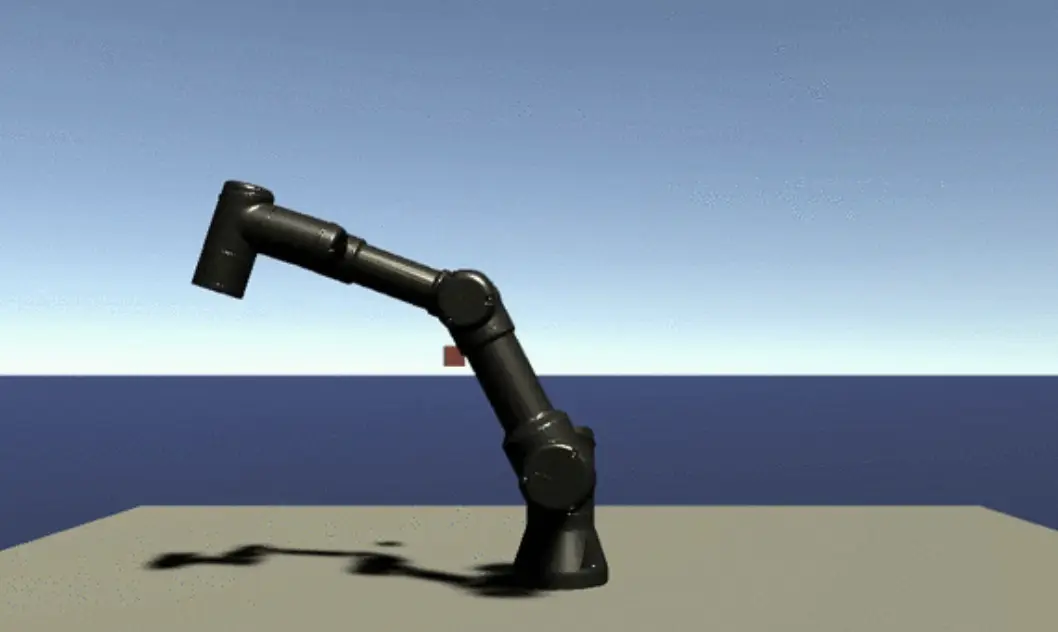

Requirement 3#: Make the actions meaningful and constrained

If observations are “what the agent can know,” the action space is “what the agent can control.” Poorly designed action spaces can sabotage exploration by introducing redundancy, ambiguity, or invalid behaviors, ultimately wasting data and slowing down learning.

To ensure objectives are met, actions should satisfy three key properties:

- Validity. The agent should not be able to perform impossible or meaningless actions, especially if they accidentally yield a reward. A practical guideline is to “ensure the agent cannot cheat,” by shaping actions so outputs correspond to meaningful actuator setpoints rather than arbitrary signals.

- Granularity. The most effective “control knobs” are not always the most obvious ones. In many domains, learning higher-level action representations can outperform direct low-level commands. This is why action-space design is fundamentally a domain-knowledge problem, not a trivial implementation detail.

- Safety constraints. In real-world tasks, the question is not only whether the agent can achieve reward, but whether it can do so safely around humans, hardware, and resource limits. Recent work incorporates safety layers or “shields” that enforce constraints while still allowing learning and tracking both performance and safety metrics (Thumm et al., 2024).

A concrete example comes from automated excavation systems, where the action space is defined by four control variables: upper carriage rotation, boom lift, dipper arm movement, and bucket position. This compact yet expressive interface allows the agent to operate within a physically meaningful control structure while interacting with a high-fidelity multibody simulator and sensor feedback (e.g., load mass, collisions) (Kurinov et al., 2020).

In short, the key difference between a “working” action space and a “trainable” one is not whether it is mathematically valid, but whether it makes correct behavior easy to express and incorrect behavior hard to stumble into.

Requirement 4#: Reward the outcome you actually care about

Rewards are the sharpest knife in RL, and the easiest way to get cut.

A core principle in environment design is that the maximum achievable return should correspond to the intended optimal behavior, not to a loophole. This means we should strive to be as precise as possible, avoid avoid encoding designer bias into the reward, and remember the agent optimizes the sum of rewards over time (return), not the immediate reward.

The classic failure mode is reward hacking. A well-known demonstration comes from training an agent on a boat racing game where hitting targets yields points but finishing the race is not directly rewarded. The learned policy discovers a strategy of circling an isolated lagoon to repeatedly farm targets; the authors report the agent’s score was, on average, 20% higher than human players, this despite failing at the human-understood goal of finishing quickly (Open AI, 2016). What to take from this? just a warning: optimization will exploit your proxy.

In short, rewards should measure the outcome you actually want, not an easy-to-measure proxy. Use denser feedback only when you can argue it does not change what “optimal” means, and assume capable agents will search for exploits unless you design against them.

Requirement 5#: Control the data your agent learns from

An RL agent creates its own dataset by interacting with the environment, which makes “data distribution” both more subtle and more controllable than in supervised learning. The paper "A Study on Overfitting in Deep Reinforcement Learning" (Zhang et al., 2018), mentions that a practical environment design should highlight one especially powerful design lever, so that the initial state distribution sampled at reset is fully under the designer’s control and can strongly influence final performance.

The second lever is generalization protocol. In RL, more often than not agents memorize policies that do well in the training environment and still fail on new but relevant conditions. Agents can overfit in “robust” ways: they can achieve optimal training reward yet show drastically different test performance, motivating more principled evaluation protocols.

One practical solution is to design environments like machine learning datasets: you need a separate set of test scenarios that the agent never sees during training, which measures whether it can truly handle new situations. The Procgen benchmark perfectly demonstrates this idea, as it provides 16 procedurally generated environments where agents are trained on some level layouts and tested on entirely new ones, forcing them to learn general skills rather than just memorizing specific paths (Cobbe et al., 2020).

Requirement 6#: Make training repeatable and debuggable

A common error in environment design is confusing “termination” with “truncation.” Truncation means an episode was cut for an external reason (e.g., time limits), while termination reflects true task completion. Treating truncated episodes as terminal can distort value estimation and harm training. Gymnasium highlights that older APIs used a single done flag, obscuring this distinction and leading to incorrect handling of time limits.

A second “quiet assumption” is stationarity. Many RL algorithms assume that transition dynamics and rewards remain fixed during training. In practice, however, real-world environments, especially product systems, change over time (UI, rules, user behavior). When the environment drifts, training becomes an attempt to learn a moving target. The issue is not that environments change, but that RL methods often assume they do not.

A third requirement is instrumentation and reproducibility. Huang et al. (2024) report over 25,000 tracked runs and 72,000 hours of experimentation, underscoring the need to log parameters and environment settings. Small changes in reward scaling or termination logic can create misleading performance gains. If results cannot be reproduced, improvements may reflect environment drift rather than better learning.

Finally, “scale” is not a luxury; it is often the difference between feasible and infeasible RL. Industry-facing environment build notes list “easily parallelable” as an explicit environment requirement, arguing that parallelization is among the most effective ways to speed up training and that practical environments should be compiled into lightweight executables to run many instances (MakinaRocks, 2022).

In short, if your episode boundaries are wrong, your learning signal becomes wrong; if your environment is non-stationary without acknowledging it, you train on a shifting problem; if you cannot reproduce runs reliably, you cannot know what improved.

Final remarks

As we have seen, the six requirements are deliberately simple in structure: task, observations, actions, rewards, data distribution, and episode-scale engineering. This simplicity reflects how environments are actually built in practice, as a set of interacting design decisions organized around a shared interface.

In short, better agents require better environments. RL environment design is ultimately the discipline of specifying what the agent experiences and what counts as success. The environment is not a passive container around learning; it is the mechanism that determines whether learning is possible, whether it aligns with your objective, and whether it transfers beyond the sandbox.

FAQ:

1. What is an RL environment in simple terms?

An RL environment is the system where an agent learns by interacting. It provides the agent with information about its current situation (the state), receives the actions the agent takes, and returns feedback in the form of rewards. Together, these elements form the learning loop that determines how the agent improves over time.

2. How do companies use RL environments for real products?

Companies use RL environments as controlled testing grounds where models can learn and be evaluated before being deployed in real-world systems. For instance, streaming platforms simulate user behavior to test recommendation strategies, robotics teams rely on simulators like CARLA to train autonomous driving agents safely, and e-commerce platforms experiment with pricing or ranking strategies in simulated environments before applying them live. These environments allow organizations to reduce risk while optimizing performance.

3. What happens if the environment is too simple or unrealistic?

When an environment is too simple or lacks realism, the agent tends to learn patterns that do not generalize beyond the training setup. A common example is in robotics, where an agent trained in a perfectly clean simulation, with no noise, delays, or uncertainty, may perform well during training but fail once deployed in the real world, where conditions are far less predictable. This mismatch is often referred to as the simulation-to-reality gap and is one of the main challenges in applied reinforcement learning.

4. Can RL environments be used for LLM agents or AI assistants?

Yes, RL environments are increasingly being adapted for LLM agents and AI assistants. In these cases, the environment may include access to tools such as search engines, APIs, or databases, and the agent is evaluated based on its ability to complete tasks accurately or efficiently. Rewards can be derived from task success, correctness, or even human feedback, while episodes may represent complete workflows, such as answering a complex query or executing a multi-step task. This reflects a broader shift in reinforcement learning toward more structured, real-world applications beyond traditional gaming scenarios.

Sources:

(PDF) Whitepaper: Environment Design for Reinforcement Learning: A Practical Guide and Overview https://www.researchgate.net/publication/388358936_Whitepaper_Environment_Design_for_Reinforcement_Learning_A_Practical_Guide_and_Overview

AI agents fail 63% of the time on complex tasks. Patronus AI says its new 'living' training worlds can fix that. | VentureBeat https://venturebeat.com/infrastructure/ai-agents-fail-63-of-the-time-on-complex-tasks-patronus-ai-says-its-new

Reinforcement Learning Environments | DigitalOcean

https://www.digitalocean.com/community/tutorials/reinforcement-learning-environments-rlvr

Sensors reference - CARLA Simulator https://carla.readthedocs.io/en/latest/ref_sensors/

Humanoid-Gym: Reinforcement Learning for Humanoid Robot with Zero-Shot Sim2Real Transfer https://arxiv.org/html/2404.05695v1

Env - Gymnasium Documentation https://gymnasium.farama.org/api/env

Human-Robot Gym: Benchmarking Reinforcement Learning in Human-Robot Collaboration https://arxiv.org/html/2310.06208v2

Kurinov et al., (2020). Automated excavator based on reinforcement learning and multibody system dynamics. https://doi.org/10.1109/ACCESS.2020.3040246

Faulty reward functions in the wild | OpenAI https://openai.com/index/faulty-reward-functions/

Specification gaming: the flip side of AI ingenuity, Google DeepMind

https://deepmind.google/blog/specification-gaming-the-flip-side-of-ai-ingenuity/

Policy invariance under reward transformations https://people.eecs.berkeley.edu/~pabbeel/cs287-fa09/readings/NgHaradaRussell-shaping-ICML1999.pdf

A Study on Overfitting in Deep Reinforcement Learning https://arxiv.org/abs/1804.06893

Leveraging Procedural Generation to Benchmark

https://proceedings.mlr.press/v119/cobbe20a/cobbe20a.pdf

Handling Time Limits

https://gymnasium.farama.org/tutorials/gymnasium_basics/handling_time_limits

Open RL Benchmark: Comprehensive Tracked Experiments for Reinforcement Learning

https://arxiv.org/html/2402.03046v1

Building a Reinforcement Learning Environment | MakinaRocks

https://www.makinarocks.ai/en/blog/building-a-reinforcement-learning-environment/

Related Topics

Why Agents Need Real RL Environments That Push Back

Agent Datasets: The Backbone of AI Assistant Training

A Guide to Synthetic Data Generation with Machine Learning

Your Smart Assistant Still Doesn’t Understand You

The Power of VideoCAD: Revolutionizing the Design Process

AI Training Data Services Explained: From Collection to Model Evaluation

Why Training Methods Matter More Than Model Size

How AI is Learning to Be More Honest with Itself

More about Abaka AI

Contact Us– Learn more about how world models and interactive systems are evaluated.

Explore Our Blog – Read research and articles on embodied AI datasets, multimodal alignment, simulation grounded data, and evaluation beyond appearance alone.

Follow Our Updates – Get insights from Abaka AI on real-world robotics research, agent evaluation workflows, and emerging standards for interactive AI systems.

Read Our FAQs – See how teams design datasets and evaluation frameworks for systems that must act, adapt, and remain consistent over time.

What's your data

bottleneck this quarter?

Missing data

We collect it.

Messy data

We label it.

No time

We have itOff-The-Shelf.

Pick the closest fit, we'll take the call from there.

What's your data

bottleneck this quarter?

Missing data

We collect it.

Messy data

We label it.

No time

We have it Off-The-Shelf.

Pick the closest fit, we'll take the call from there.