This article explores how GPT-5.4, OpenAI’s groundbreaking model, is shifting the AI landscape by moving beyond just answering questions or generating text. With its native computer-use capabilities, GPT-5.4 is now capable of interacting directly with software environments, allowing it to carry out complex tasks like navigating applications, clicking buttons, and typing commands. This shift could revolutionize how AI participates in everyday digital work, offering a glimpse into the future of digital coworking.

OpenAI’s GPT-5.4 Beats Humans at Desktop Tasks: What Changed?

The thought of a digital colleague sounds intriguing. It wouldn't be sensitive to feedback, wouldn't join in office gossip, and likely wouldn't get your promotion... until you imagine that it might be clearly better than you at the only job you know how to do, and your boss starts making mildly unsettling remarks about how soon they could bring in more digital employees.

As nice as it would be if this were highly unlikely, or a problem for the next generation, it has already happened: for the first time, an AI model has outscored human experts on a benchmark of real desktop computer tasks.

OpenAI’s newest model, GPT-5.4, achieved a 75% success rate on OSWorld-Verified, a benchmark designed to test whether AI systems can complete real desktop tasks such as navigating applications, filling forms, and interacting with software interfaces. Human experts scored 72.4% on the same benchmark, showing that the model now performs slightly better than people at these tasks (OpenAI, 2026).

This milestone clearly signals an important shift in artificial intelligence. Right now, we are not simply experiencing progress; this is a full leap forward to another stage. AI systems are moving beyond answering questions and generating text toward actually performing work on computers.

But how did GPT-5.4 make this leap?

Several technical improvements, including native computer use, stronger reasoning, improved vision capabilities, and better tool integration, have pushed AI systems closer to functioning as autonomous digital workers rather than helpful chatbots.

From Chatbot to Digital Coworker

For a long time, the main promise of artificial intelligence has been to automate tasks and speed up our work. Earlier large language models primarily acted as conversational assistants. They could generate text, summarize documents, or write code, but humans still had to carry out the steps on their computers.

GPT-5.4 changes this exact paradigm.

According to OpenAI, the model is the company’s first general-purpose model with native computer-use capabilities, allowing it to interact directly with software environments (OpenAI, 2026). Instead of simply describing how to complete a task, the system can execute actions such as clicking buttons, navigating interfaces, typing commands, and interacting with applications.

This fundamentally changes the user experience.

Rather than prompting a chatbot for instructions, users can effectively delegate tasks to an AI agent operating within their software environment. As one analysis describes it, the interaction begins to feel less like using a tool and more like working alongside a digital coworker capable of carrying out workflows on its own (CodeAI, 2026).

If this capability proves reliable in real-world settings, it could mark a major transition in how AI systems participate in everyday digital work.
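The observe-and-act pattern described in this section can be sketched as a small loop. This is only an illustration under stated assumptions: `FakeDesktop`, `Action`, and `choose_action` are hypothetical names invented here, the "screen" is a plain dictionary rather than a screenshot, and the decision rule is hard-coded where a real system would ask the model. It is not OpenAI's API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Action:
    kind: str        # "click" or "type"
    target: str      # name of the UI element
    text: str = ""   # only used for "type" actions


@dataclass
class FakeDesktop:
    """Toy stand-in for a real screen: one text field and a submit button."""
    fields: dict = field(default_factory=lambda: {"name": ""})
    submitted: bool = False

    def observe(self) -> dict:
        # A real agent would receive a screenshot; here the state is a dict.
        return {"fields": dict(self.fields), "submitted": self.submitted}

    def execute(self, action: Action) -> None:
        if action.kind == "type":
            self.fields[action.target] = action.text
        elif action.kind == "click" and action.target == "submit":
            self.submitted = True


def choose_action(observation: dict, goal_name: str) -> Optional[Action]:
    """Hypothetical policy; in a real system the model makes this decision."""
    if observation["submitted"]:
        return None  # nothing left to do
    if observation["fields"]["name"] != goal_name:
        return Action("type", "name", goal_name)
    return Action("click", "submit")


def run_agent(env: FakeDesktop, goal_name: str, max_steps: int = 10) -> int:
    """Observe, decide, act; stop when done or when the step budget is spent."""
    for step in range(max_steps):
        action = choose_action(env.observe(), goal_name)
        if action is None:
            return step
        env.execute(action)
    return max_steps


desktop = FakeDesktop()
steps = run_agent(desktop, "Ada")
print(steps, desktop.fields, desktop.submitted)  # 2 {'name': 'Ada'} True
```

The step budget (`max_steps`) is a design choice worth keeping in any real agent loop, since it stops a confused agent from acting indefinitely.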

A Major Leap in Desktop Task Performance

One of the clearest indicators of GPT-5.4’s improvement is its performance on computer-use benchmarks.

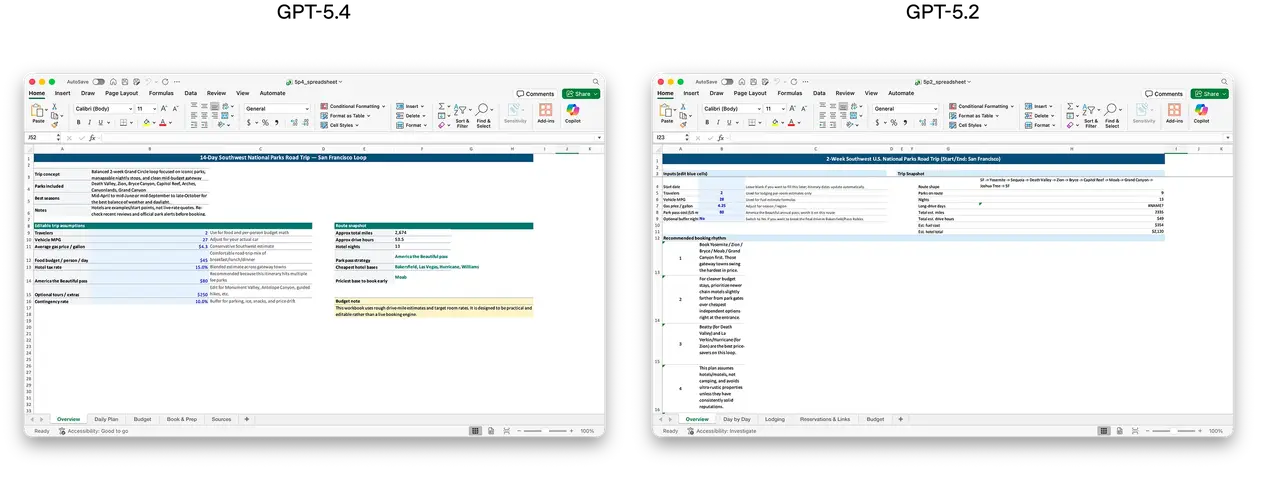

On OSWorld-Verified, which measures a model’s ability to operate desktop software using screenshots and keyboard or mouse actions, GPT-5.4 achieved a 75.0% success rate (OpenAI, 2026). Human experts scored approximately 72.4% on the same benchmark, while the previous GPT-5.2 model achieved only 47.3%.

This represents a dramatic improvement in the model’s ability to interpret visual interfaces and execute sequences of actions.
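To put those numbers in perspective, here is a quick calculation using only the three scores quoted above. The variable names are ours, and the script simply does the arithmetic; it says nothing about statistical significance.

```python
# Scores reported in the text (percent success on OSWorld-Verified).
scores = {"GPT-5.4": 75.0, "human experts": 72.4, "GPT-5.2": 47.3}

# Absolute gaps, in percentage points.
gap_vs_humans = round(scores["GPT-5.4"] - scores["human experts"], 1)
gain_vs_prev = round(scores["GPT-5.4"] - scores["GPT-5.2"], 1)

# Relative improvement over the previous model, in percent.
relative_gain = round(100 * (scores["GPT-5.4"] - scores["GPT-5.2"]) / scores["GPT-5.2"], 1)

print(gap_vs_humans, gain_vs_prev, relative_gain)  # 2.6 27.7 58.6
```

The lead over human experts is 2.6 percentage points, while the jump from GPT-5.2 is 27.7 points, or roughly a 59% relative improvement. The generational gain is the larger story; the margin over humans is narrow.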

Benchmarks like OSWorld test realistic tasks such as:

- navigating operating systems

- opening and configuring applications

- interacting with menus and UI elements

- completing multi-step workflows

Benchmarks never perfectly capture real-world complexity. Even so, the results suggest that AI systems are rapidly improving at tasks that require direct interaction with software environments.

Why Computer Use Matters for AI

The significance of GPT-5.4’s capabilities goes beyond the convenience of having AI open applications or navigate menus.

The real breakthrough is that the model can function within the messy environments where human digital work actually happens. Traditional automation tools depend on APIs or rigid scripts, but many real-world workflows involve inconsistent interfaces, permissions systems, and visual elements that change over time.

AI systems that can reliably interpret these environments could dramatically expand the range of tasks that can be automated.

As analysts have noted, once an AI system can navigate the same visual environments humans use—spreadsheets, dashboards, enterprise software, and web portals—the number of tasks it can perform grows significantly (CodeAI, 2026).

In other words, the future of AI may be determined less by how well a system writes text and more by how effectively it can execute workflows across real software tools.

Longer Context Enables Complex Workflows

Another major improvement in GPT-5.4 is its expanded context window.

The model supports up to one million tokens of context, allowing it to maintain awareness across long conversations and complex tasks (OpenAI, 2026). This expanded memory helps the system plan and execute multi-step workflows without losing track of earlier instructions or documents.

Longer context windows are particularly important for AI agents that operate continuously. Previous agent systems often struggled with long tasks because they forgot earlier steps or lost important information during execution. How annoying was that!

With a much larger working memory, GPT-5.4 can potentially manage longer processes such as:

- analyzing large datasets

- navigating multiple applications

- completing research workflows

- executing multi-stage automation tasks

For AI agents designed to operate autonomously, maintaining state across extended workflows is non-negotiable.
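A toy example shows why the size of the window matters for long tasks. The sketch below assumes a crude estimate of four characters per token (not a real tokenizer) and a hypothetical `WorkflowHistory` class that drops the oldest steps once the budget is exceeded. It is meant to illustrate the forgetting problem, not how GPT-5.4 manages context internally.

```python
from collections import deque

CONTEXT_LIMIT_TOKENS = 1_000_000  # figure cited in the text
CHARS_PER_TOKEN = 4               # rough assumption, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return max(1, len(text) // CHARS_PER_TOKEN)


class WorkflowHistory:
    """Keeps step records and drops the oldest once the budget is exceeded."""

    def __init__(self, limit_tokens: int = CONTEXT_LIMIT_TOKENS):
        self.limit_tokens = limit_tokens
        self.steps = deque()
        self.used_tokens = 0

    def add(self, record: str) -> None:
        self.steps.append(record)
        self.used_tokens += estimate_tokens(record)
        # Evict from the front until the history fits again.
        while self.used_tokens > self.limit_tokens and len(self.steps) > 1:
            dropped = self.steps.popleft()
            self.used_tokens -= estimate_tokens(dropped)


small = WorkflowHistory(limit_tokens=10)  # tiny window for demonstration
large = WorkflowHistory()                 # one-million-token window
for i in range(5):
    record = f"step {i}: opened the report"
    small.add(record)
    large.add(record)

print(len(small.steps), len(large.steps))  # 1 5
```

With the tiny window, only the latest step survives, which is the "forgot earlier steps" failure described above. With the large window, all five steps remain available.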

Smarter Tool Use and Lower Cost

Another great addition is the way GPT-5.4 interacts with external tools.

Instead of loading every tool definition into the prompt, the model can now use tool search, retrieving only the tools needed for a specific task (OpenAI, 2026). This approach reduces token usage while allowing agents to operate within larger tool ecosystems.
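The general idea behind tool search can be sketched in a few lines. Everything here is an assumption made for illustration: the tool catalog is made up, and the ranking is a naive word-overlap score. OpenAI has not been quoted in this article on how its tool search works internally, so treat this as a stand-in technique rather than the real mechanism.

```python
import re

# Hypothetical tool catalog; names and descriptions are invented for this example.
TOOLS = {
    "create_invoice": "Create an invoice for a customer order",
    "send_email": "Send an email message to a recipient",
    "query_database": "Run a SQL query against the sales database",
    "resize_image": "Resize an image file to given dimensions",
}

STOPWORDS = {"a", "an", "the", "to", "for", "with"}


def tokenize(text: str) -> set[str]:
    """Lowercase words, minus a few common stopwords."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOPWORDS


def search_tools(task: str, catalog: dict[str, str], top_k: int = 2) -> list[str]:
    """Rank tools by word overlap with the task and return the best matches."""
    task_words = tokenize(task)
    scored = []
    for name, description in catalog.items():
        tool_words = tokenize(name.replace("_", " ") + " " + description)
        overlap = len(task_words & tool_words)
        if overlap > 0:
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:top_k]]


selected = search_tools("send an email with the invoice to the customer", TOOLS)
print(selected)  # ['create_invoice', 'send_email']
```

Only the two selected definitions would then be placed in the prompt, instead of all four. With a catalog of hundreds of tools, that difference is where the token savings come from.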

Cost efficiency is a crucial factor for real-world AI deployment. To be feasible, agent systems that run continuously have to balance performance against operational cost.

According to OpenAI, GPT-5.4 is significantly more token efficient than earlier models, allowing it to solve tasks with fewer tokens and faster response times (OpenAI, 2026).

These efficiency gains could make long-running AI agents economically viable for enterprise use.

Stronger Performance Across Digital Work

Beyond desktop navigation, GPT-5.4 was designed to improve performance across a range of professional tasks.

The model already incorporates advances in reasoning, coding, and agent workflows. This enables it to complete complex tasks across documents, spreadsheets, presentations, and other productivity tools (OpenAI, 2026).

Developers can also control the model’s behavior using structured prompts and safety policies, allowing organizations to adjust how the AI operates depending on their risk tolerance.

Clearly, GPT-5.4 is designed not just as a conversational AI but as a platform for building autonomous digital agents capable of operating across software environments.

Are AI Workers Actually Here?

While GPT-5.4’s benchmark performance is impressive, real-world adoption will ultimately determine how transformative these capabilities are.

Desktop environments are unpredictable. Interfaces change, permissions vary, and workflows often involve edge cases that benchmarks do not capture.

However, the trajectory is clear. As one analysis noted, if these capabilities hold up outside controlled tests, we may be seeing the emergence of a new category of product: an AI system that can operate software as effectively as the average user in real environments (CodeAI, 2026).

That would represent a revolution in how automation works.

Build Reliable AI Systems with High-Quality Training Data

With AI models becoming capable of performing complex digital tasks, the importance of high-quality training data and robust evaluation pipelines continues to grow. Even the most advanced models rely on structured datasets, precise annotations, and carefully designed benchmarks to perform reliably in real-world environments.

Abaka AI helps organizations build production-ready AI systems by providing scalable AI training data services, including data collection, annotation, quality assurance, and model evaluation. Whether you're developing computer vision systems, language models, or AI agents that interact with software environments, Abaka AI ensures your datasets are accurate, diverse, and aligned with real-world use cases.

If you're building AI products that require reliable data pipelines, check out Abaka AI to scale your AI training data workflows efficiently and responsibly.

Sources

OpenAI. (2026). Introducing GPT-5.4.

https://openai.com/index/introducing-gpt-5-4/

CodeAI. (2026). The AI employee just got real: GPT-5.4 could change computer work forever.

https://medium.com/@codeai/the-ai-employee-just-got-real-gpt-5-4-why-could-change-computer-work-forever-4021714b8532

FAQ

What is GPT-5.4 and how is it different from previous models?

GPT-5.4 is OpenAI’s latest large language model designed to perform complex tasks beyond text generation. Unlike earlier models that primarily responded to prompts, GPT-5.4 can interact with computer interfaces, use external tools, and execute multi-step workflows. These capabilities allow it to complete real desktop tasks such as navigating software applications or filling out forms.

What benchmark did GPT-5.4 beat humans on?

GPT-5.4 surpassed human performance on the OSWorld-Verified benchmark, which measures how effectively an AI system can complete real desktop tasks using screenshots, mouse actions, and keyboard inputs. The model achieved a 75% success rate, slightly outperforming human experts who scored around 72.4%.

Can AI now replace humans in desktop tasks?

Not entirely. While GPT-5.4 performs strongly on structured benchmarks, real-world workflows still require human oversight, judgment, and error handling. However, AI agents are increasingly capable of assisting with repetitive digital tasks, automating parts of workflows, and supporting knowledge workers across many industries.

Why is training data important for AI agents?

AI models learn patterns from the data they are trained on. High-quality training data helps models understand user intent, interpret interfaces correctly, and generate reliable outputs. Poor or biased datasets can lead to inaccurate predictions and unreliable AI behavior.

What role do data labeling and evaluation play in AI performance?

Data labeling provides the ground truth that machine learning models learn from. Evaluation benchmarks then measure whether models perform tasks correctly in realistic scenarios. Together, these processes help ensure that AI systems behave accurately, consistently, and safely in production environments.

Further Reading:

👉 AI Can Now Use Your Computer: Why FDM-1 Signals the Next Agent Breakthrough

👉 The AI Agent Evaluation Crisis: Bridging the 37% Production Gap

👉 GPT-5 vs. Gemini 3 Pro: Specialized Science Verdict from SuperGPQA

👉 EditReward: Outperforming GPT-5 in AI Image Editing Alignment
