Standard Intelligence's FDM-1 is the first universal computer action model, trained on 11 million hours of video to move AI from simple chatbots to autonomous digital workers. By achieving 50x greater token efficiency and 11ms latency, it enables high-speed execution of complex tasks like CAD modeling and real-world driving.

AI Can Now Use Your Computer: Why FDM-1 Signals the Next Agent Breakthrough

AI Can Now Use Your Computer: Why FDM-1 Signals the Next Agent Breakthrough

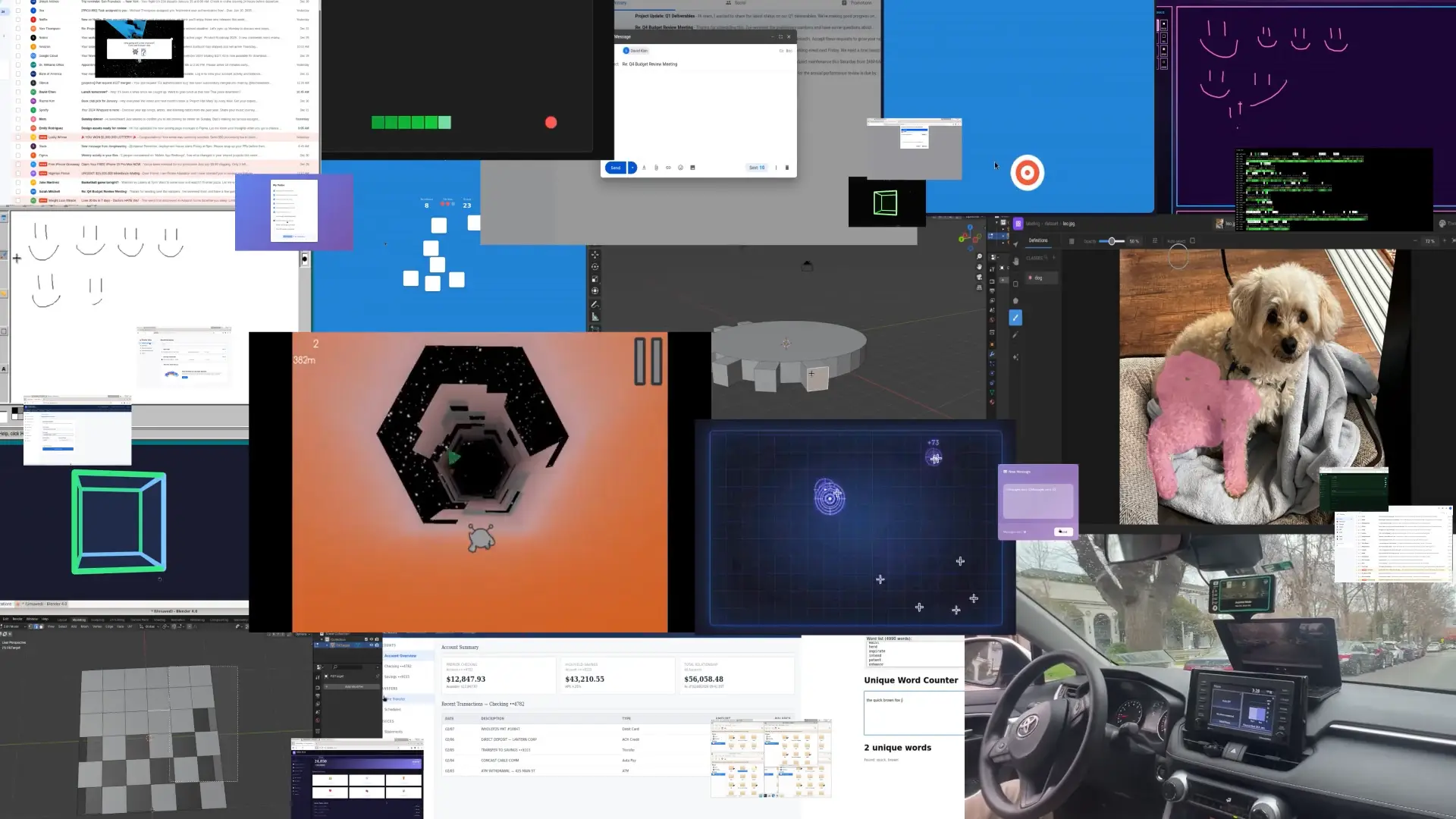

FDM-1, released by Standard Intelligence, is a foundation model designed for computer use and one of the first large-scale systems trained to model computer interaction directly from video. While the artificial intelligence industry has spent the last few years mastering text, images, and basic reasoning, a critical gap has remained: actual execution. AI systems could write code or draft emails, but operating software interfaces across long workflows has remained difficult, with most approaches relying on screenshot-based agents or task-specific training environments. FDM-1 introduces a different approach by learning patterns of human computer interaction directly from screen-recording videos, enabling the model to predict actions such as mouse movements and key presses from visual context.

According to the Standard Intelligence technical release, the system is trained on large-scale datasets of recorded computer activity and uses an inverse dynamics model to infer action labels from video. This approach allows computer-interaction data to scale far beyond manually annotated demonstrations, suggesting a path toward training more general computer-use models using large volumes of behavioral data.

Overcoming the Data Bottleneck

Historically, building computer-use agents required fine-tuning Vision-Language Models (VLMs) on manually annotated screenshots of software interfaces. These datasets were often expensive to produce and limited in scale; as noted by the Standard Intelligence team, many available labeled datasets contain only tens of hours of high-quality recordings of human computer interaction.

FDM-1 introduces a different scaling strategy. According to the FDM-1 technical release, the model was trained using a dataset containing approximately 11 million hours of screen-recording video. To achieve this, the team trained an Inverse Dynamics Model (IDM), a concept supported by foundational research such as OpenAI’s Video PreTraining (VPT) paper, on around 40,000 hours of human-annotated recordings. Once trained, the IDM was used to infer actions such as mouse movements and key presses across a much larger corpus of recorded computer activity.

This approach combines high-quality human annotations with automated labeling, enabling computer-interaction datasets to scale far beyond what manual labeling alone could achieve while still relying on curated human demonstrations to ground the learning process.

Unprecedented Token Efficiency and Latency

One of the biggest hurdles in processing video for AI is context length. Standard Intelligence notes that traditional VLMs can burn around a million tokens just to process a single minute of 30 FPS computer video. FDM-1's architecture is designed to address this limitation by enabling far longer video contexts.

According to the technical specifications provided by Standard Intelligence, the model offers several notable improvements:

- Massive Compression: The video encoder can compress almost 2 hours of 30 FPS video into just 1M tokens.

- Industry-Leading Efficiency: This makes FDM-1 50x more token-efficient than previous state-of-the-art models and 100x more token-efficient than OpenAI's vision encoder.

- Hyper-Fast Training: Compared to a baseline Vision Transformer (ViT), the FDM-1 encoder achieves ~100x faster convergence during training.

- Ultra-Low Latency: ~11 ms round-trip screen capture-to-action latency enabled by GPU-VM colocation, low-latency VNC tuning, sequence-length packing, and custom Rust input bindings.

Real-World Capabilities: From CAD to Cars

Because FDM-1 can process long-horizon contexts (up to 20 minutes in a 200k token window, as per Standard Intelligence's context table, it can execute complex workflows that were previously impossible for AI. It trains and infers directly on video and action tokens, bypassing the latency of traditional chain-of-thought reasoning.

The Standard Intelligence report highlights several startling, real-world proficiencies:

- Advanced 3D Modeling: FDM-1 performs continuous mouse actions in Blender, completing CAD tasks such as extruding faces on an n-gon.

- Automated UI "Fuzzing": The model explores GUI state trees to discover bugs in a mock banking application, including a flaw allowing repeated wire transfers to produce a negative balance

- Autonomous Driving: After fine-tuning on less than one hour of driving data, FDM-1 uses arrow-key inputs through a web interface to navigate turns around a block in San Francisco. The model begins with 50% key-press prediction accuracy, significantly higher than a baseline model trained without internet video pretraining.

Why FDM-1 is the Next Agent Breakthrough

The release of FDM-1 highlights an important shift in artificial intelligence: models are beginning to move beyond passive understanding toward directly operating computer interfaces. By demonstrating that models can learn computer actions from large-scale screen-recording video collected across the internet, Standard Intelligence shows a new path for training general computer-use systems.

Rather than relying solely on manually annotated screenshots, FDM-1 learns from millions of hours of human computer interaction, enabling it to perform tasks ranging from CAD modeling to UI testing and simulated driving. These demonstrations suggest that long-horizon, video-native models could become a foundation for future AI systems capable of interacting with software environments more autonomously.

Further Reading

Explore more insights from the Abaka AI Blog on how we are building the data foundations for this new era of autonomous agents:

- Abaka AI's VeriGUI: Building Trustworthy Agent Data – Discover how our VeriGUI project uses an adversarial QA process to produce the trustworthy data necessary for autonomous agents.

- Why Agents Need Real RL Environments That Push Back – Why scalable, time-aware, and "reactive" Reinforcement Learning environments are the critical next step for models operating in the real world.

- Breaking the Code Intelligence Barrier: How SWE-Bench Pro and Abaka AI Enable Real-World Coding Intelligence – How high-fidelity engineering datasets enable models to operate within complex software development workflows.