AI is shifting from chatbots that generate responses to agents that execute tasks. Recent innovations from companies like Anthropic, Cursor, and Perplexity demonstrate how modern AI systems can plan, act, and operate software environments autonomously. These agent-based systems combine reasoning, tool usage, and memory to perform complex workflows—effectively transforming large language models into self-running computers capable of acting as digital operators. In short: chatbots answer questions, but AI agents complete work.


From Chatbots to Operators: Why AI Agents and OpenClaw Systems Are Becoming Self-Running Computers

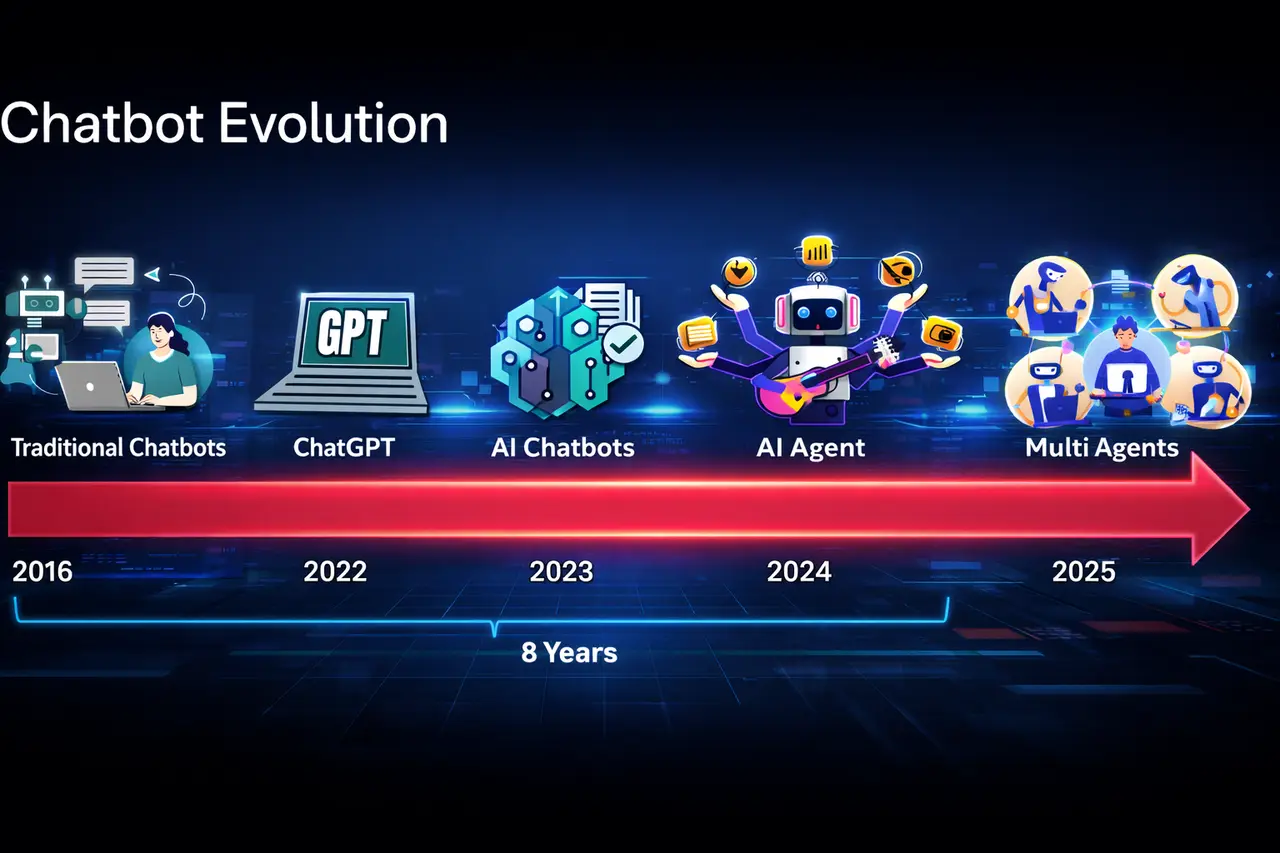

For years, AI assistants functioned primarily as chatbots, systems that generated text responses when prompted. But a new paradigm is emerging: AI agents that can operate computers autonomously.

Recent developments from companies like Anthropic, Cursor, and Perplexity signal a rapid transition from conversational AI to operational AI:

- Anthropic’s Claude is evolving toward autonomous agent workflows.

- Cursor Cloud Agents allow AI to run development tasks asynchronously.

- Perplexity Computer introduces AI systems that can directly operate software.

In short, AI is moving from answering questions to executing tasks. Next-generation systems are becoming self-running computers capable of reasoning, planning, and acting within digital environments.

The Evolution: From Chatbots to Autonomous Operators

Traditional chatbots follow a single-turn pattern:

- User asks a question

- AI generates a response

- Interaction ends

AI agents introduce a continuous reasoning loop:

- Perceive: Observe environment or task

- Reason: Plan steps to accomplish goal

- Act: Use tools, APIs, or software

- Evaluate: Adjust plan based on results

This structure is often called the agentic loop.
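The loop above can be sketched in a few lines of Python. This is a toy illustration, not any vendor's implementation: the "environment" is a counter the agent must drive to a target value, and `reason()` is a hard-coded rule standing in for what would be an LLM call in a real agent. The names `Environment`, `Agent`, and `max_steps` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Environment:
    """Toy environment: a single counter the agent can change."""
    value: int = 0

    def apply(self, delta: int) -> None:
        self.value += delta


@dataclass
class Agent:
    target: int               # the goal: drive the counter to this value
    max_steps: int = 20       # step budget so a stuck agent cannot loop forever
    log: list[str] = field(default_factory=list)

    def perceive(self, env: Environment) -> int:
        # Perceive: observe how far the environment is from the goal.
        return self.target - env.value

    def reason(self, gap: int) -> int:
        # Reason: plan the next step (a hard-coded rule standing in for an LLM):
        # move toward the target by at most 5 units.
        return max(-5, min(5, gap))

    def act(self, env: Environment, delta: int) -> None:
        # Act: apply the planned change to the environment.
        env.apply(delta)
        self.log.append(f"applied {delta:+d} -> value {env.value}")

    def evaluate(self, env: Environment) -> bool:
        # Evaluate: check the result; if the goal is not met, the next
        # iteration re-plans from a fresh observation.
        return env.value == self.target

    def run(self, env: Environment) -> bool:
        for _ in range(self.max_steps):
            gap = self.perceive(env)
            self.act(env, self.reason(gap))
            if self.evaluate(env):
                return True
        return False  # budget exhausted before the goal was reached


agent = Agent(target=12)
env = Environment()
print(agent.run(env))  # True
print(agent.log)
```

The `max_steps` budget is the important design choice: without it, an agent whose plan never converges would keep looping.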

According to a 2024 McKinsey AI report, organizations implementing agent-based automation can reduce complex workflow time by 30-60% compared to traditional automation tools.

Chatbots generate responses.

Agents execute workflows.

The key difference is not intelligence, but agency and autonomy.

What Makes an AI Agent a “Self-Running Computer”?

Three capabilities enable this shift:

1. Tool Use

Modern LLMs can call tools such as:

- Web browsers

- Code interpreters

- APIs

- Databases

OpenAI’s function calling and similar frameworks let a model request structured calls to external software, which the surrounding application then executes, so the model can operate tools much as a human operator would.
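To make function calling concrete, here is a minimal dispatcher sketch. It assumes the model emits a tool name plus JSON-encoded arguments, which mirrors the general shape of function-calling APIs; the dict format, the `TOOLS` registry, and the `add` and `word_count` tools are hypothetical and are not any provider's actual schema.

```python
import json
from typing import Any, Callable


def add(a: float, b: float) -> float:
    """Hypothetical arithmetic tool."""
    return a + b


def word_count(text: str) -> int:
    """Hypothetical text-analysis tool."""
    return len(text.split())


# Registry mapping tool names (as the model sees them) to Python callables.
TOOLS: dict[str, Callable[..., Any]] = {"add": add, "word_count": word_count}


def dispatch(tool_call: dict[str, str]) -> str:
    """Run one model-requested tool call and return a JSON string for the model."""
    name = tool_call["name"]
    if name not in TOOLS:
        # The model asked for a tool that does not exist: report, don't crash.
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        args = json.loads(tool_call["arguments"])
        result = TOOLS[name](**args)
    except (json.JSONDecodeError, TypeError) as exc:
        # Malformed JSON or wrong/missing arguments go back to the model as data.
        return json.dumps({"error": str(exc)})
    return json.dumps({"result": result})


# Suppose the model's response requested add(a=2, b=3):
print(dispatch({"name": "add", "arguments": '{"a": 2, "b": 3}'}))  # {"result": 5}
```

Returning errors as data rather than raising lets the model see its own mistake on the next turn and try again.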

Tool-augmented reasoning significantly improves success rates on complex tasks in multiple AI benchmarks.

2. Planning and Task Decomposition

Agent systems break problems into sub-tasks.

Example workflow:

Goal: Deploy a website.

Agent steps:

- Generate code

- Run tests

- Fix errors

- Deploy infrastructure

- Monitor logs

Cursor’s new Cloud Agents automate large portions of software development this way.

Developers report productivity improvements of 2-4× when AI agents assist in coding workflows.

3. Persistent Memory

Chatbots forget conversations after the session ends.

Agents maintain state and memory across tasks.

This allows systems to:

- track long-term goals

- remember prior outputs

- update strategies

Research from DeepMind shows memory-augmented agents achieve up to 3× higher performance on long-horizon planning benchmarks.
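The three capabilities listed above can be sketched with a tiny file-backed store. This assumes a JSON file on disk as the memory backend purely for simplicity (production agents may use databases or vector stores instead); the `AgentMemory` class and its method names are hypothetical.

```python
import json
import tempfile
from pathlib import Path


class AgentMemory:
    """Hypothetical file-backed memory for goals, outputs, and strategy notes."""

    def __init__(self, path: Path) -> None:
        self.path = path
        if path.exists():
            # A later session reloads whatever earlier sessions saved.
            self.data = json.loads(path.read_text())
        else:
            self.data = {"goals": [], "outputs": [], "strategy": None}

    def add_goal(self, goal: str) -> None:
        self.data["goals"].append(goal)        # track long-term goals

    def record_output(self, output: str) -> None:
        self.data["outputs"].append(output)    # remember prior outputs

    def update_strategy(self, note: str) -> None:
        self.data["strategy"] = note           # update strategies

    def save(self) -> None:
        self.path.write_text(json.dumps(self.data, indent=2))


with tempfile.TemporaryDirectory() as tmp:
    store = Path(tmp) / "memory.json"

    first = AgentMemory(store)
    first.add_goal("Migrate the test suite to pytest")
    first.record_output("Converted tests/test_api.py")
    first.update_strategy("Convert fixtures before test bodies")
    first.save()

    second = AgentMemory(store)  # simulates a brand-new session
    print(second.data["goals"], second.data["strategy"])
```

The second instance knows nothing in-process about the first; everything it "remembers" comes from the saved file, which is the difference from a chatbot session that ends and forgets.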

Real-World Systems Leading the Agent Revolution

Claude Agents (Anthropic)

Claude’s new capabilities emphasize computer-use autonomy, where the model can interact with software interfaces directly.

These systems can:

- navigate dashboards

- run terminal commands

- execute workflows

Industry analysts describe this shift as “AI operators” replacing manual digital labor.

Cursor Cloud Agents

Cursor’s platform allows developers to delegate tasks such as:

- debugging repositories

- writing tests

- refactoring codebases

Instead of responding interactively, agents run tasks asynchronously in the cloud.

This moves AI from assistant to collaborator.
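Cursor's actual interface is not shown here. As a rough local analogy for asynchronous delegation, the sketch below uses Python's `asyncio` to launch several long-running "agent" jobs at once and collect their results later; `run_agent_task` and the sleep durations are hypothetical stand-ins for cloud jobs.

```python
import asyncio


async def run_agent_task(name: str, seconds: float) -> str:
    # Hypothetical stand-in for a long-running cloud job; sleep simulates work.
    await asyncio.sleep(seconds)
    return f"{name}: done"


async def main() -> list[str]:
    # Delegate several jobs at once; each runs concurrently with the others.
    jobs = [
        asyncio.create_task(run_agent_task("debug repository", 0.03)),
        asyncio.create_task(run_agent_task("write tests", 0.01)),
        asyncio.create_task(run_agent_task("refactor codebase", 0.02)),
    ]
    # The caller is free to do other work here while the jobs run.
    # gather() waits for all of them and returns results in submission order.
    return await asyncio.gather(*jobs)


print(asyncio.run(main()))
```

Total wall-clock time is roughly that of the slowest job rather than the sum of all three, which is the practical payoff of delegating work instead of waiting on each step interactively.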

Perplexity Computer

Perplexity’s system aims to build an AI-native operating environment.

Instead of opening apps manually, users describe goals:

“Research competitors and generate a presentation.”

The agent then:

- Collects data

- Writes content

- Creates slides

In short: the AI becomes the operating system.

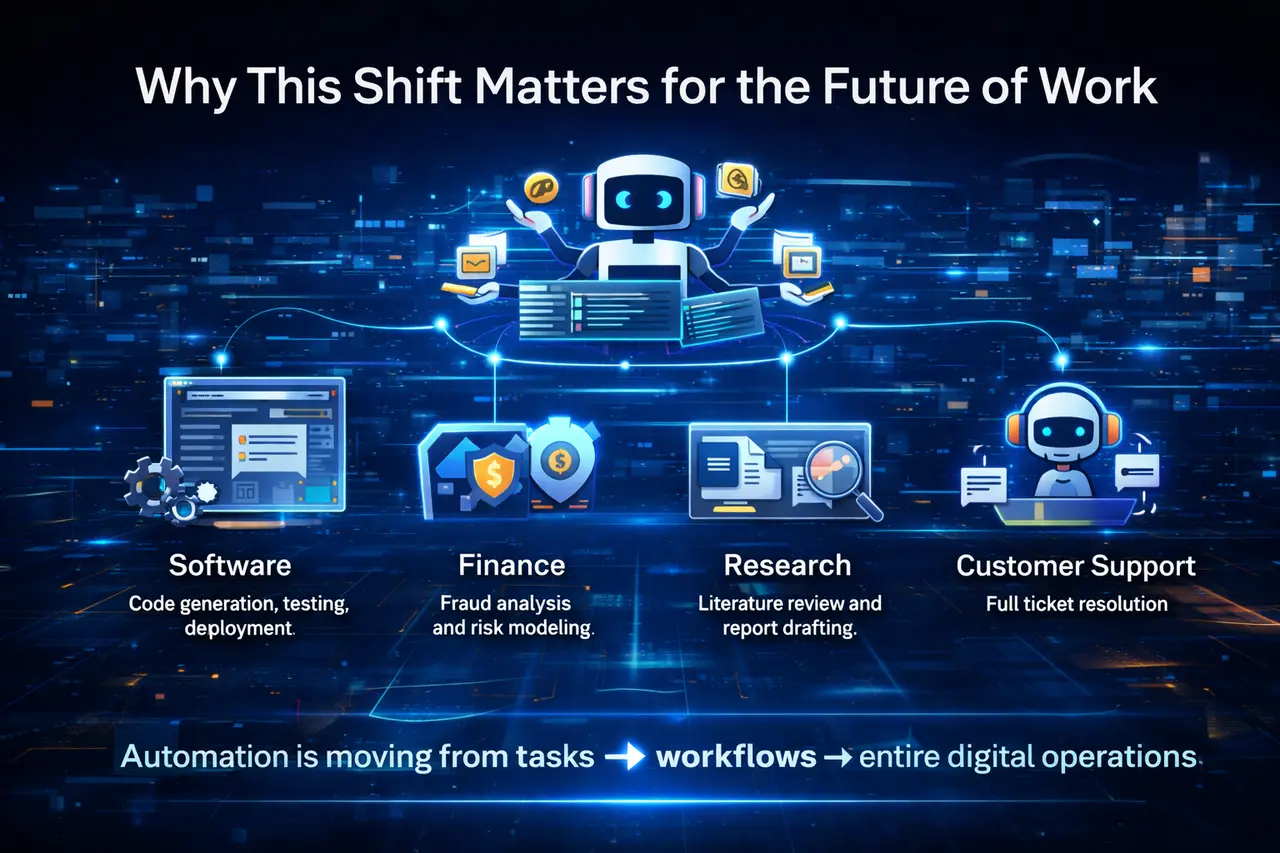

Why This Shift Matters for the Future of Work

A 2024 Goldman Sachs report estimates that generative AI could automate up to 25% of current work tasks globally.

But agent-based systems push this further by automating multi-step workflows.

Examples include:

| Industry | Agent Workflow |
| --- | --- |
| Software | Code generation, testing, deployment |
| Finance | Fraud analysis and risk modeling |
| Research | Literature review and report drafting |
| Customer support | Full ticket resolution |

Summary:

Automation is moving from tasks → workflows → entire digital operations.

The Role of Human Feedback in Training AI Operators

Even autonomous agents require human-in-the-loop training.

Techniques like Reinforcement Learning from Human Feedback (RLHF) remain critical.

Human evaluators help models learn:

- task correctness

- safety boundaries

- decision reasoning

Companies building advanced agents rely heavily on human evaluation datasets and benchmarking pipelines.
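How does human judgment become a training signal? In many RLHF pipelines, evaluators compare two model outputs and pick the better one, and a reward model is then fit so that it scores the preferred output higher. A common objective for this is the Bradley-Terry style pairwise loss sketched below. The function name and example scores are hypothetical; this illustrates the loss, not any specific company's training code.

```python
import math


def pairwise_preference_loss(r_chosen: float, r_rejected: float) -> float:
    """Bradley-Terry style loss: -log(sigmoid(r_chosen - r_rejected)).

    Small when the reward model already scores the human-preferred response
    higher; large when it prefers the response humans rejected.
    """
    margin = r_chosen - r_rejected
    # Two algebraically equal forms of log(1 + exp(-margin)); pick the one whose
    # exp() argument is non-positive so it never overflows.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Hypothetical reward-model scores (chosen, rejected) for three comparisons
# labeled by human evaluators, e.g. a correct, safe action vs. a flawed one.
comparisons = [(2.0, -1.0), (0.5, 0.5), (-1.0, 1.5)]
print([round(pairwise_preference_loss(c, r), 3) for c, r in comparisons])
```

The loss only depends on the score difference, so evaluators never need to assign absolute ratings; a consistent "A is better than B" judgment is enough.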

Without structured evaluation, agents risk:

- hallucinated actions

- unsafe operations

- unreliable automation
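One common guard against the first two risks is a review step between what the agent proposes and what actually runs. The sketch below assumes a hypothetical policy: actions naming a tool the agent does not have are rejected as hallucinated, and actions on a "risky" list need a human's approval. `Action`, `KNOWN_KINDS`, `RISKY_KINDS`, and the reviewer function are all illustrative.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Action:
    kind: str    # which tool the agent wants to use
    target: str  # what it wants to use it on


# Hypothetical policy: tools the agent really has, and which of them are risky.
KNOWN_KINDS = {"read_file", "search", "delete_file", "run_shell"}
RISKY_KINDS = {"delete_file", "run_shell"}


def review(actions: list[Action], approve: Callable[[Action], bool]) -> list[str]:
    """Screen proposed actions before execution; returns one status per action."""
    statuses = []
    for action in actions:
        if action.kind not in KNOWN_KINDS:
            # Hallucinated action: the agent invoked a tool that does not exist.
            statuses.append("rejected: unknown action")
        elif action.kind in RISKY_KINDS and not approve(action):
            # Unsafe operation the human reviewer declined.
            statuses.append("blocked: not approved")
        else:
            # A real system would execute the action here.
            statuses.append("allowed")
    return statuses


def reviewer(action: Action) -> bool:
    # Stand-in for a human reviewer: only approves deletions under /tmp/.
    return action.target.startswith("/tmp/")


proposed = [
    Action("read_file", "sales.csv"),
    Action("delete_file", "/etc/hosts"),
    Action("delete_file", "/tmp/scratch.txt"),
    Action("format_disk", "/"),
]
for action, status in zip(proposed, review(proposed, reviewer)):
    print(f"{action.kind:<12} {action.target:<18} {status}")
```

Keeping the approval callback separate from the policy means the same gate can route to a person, a rules engine, or an automated evaluator.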

Key Challenges Facing Autonomous AI Agents

Despite rapid progress, autonomous AI agents still face several technical and operational limitations. The table below summarizes the most significant challenges.

| Challenge | Description | Examples / Evidence |
| --- | --- | --- |
| Reliability | Autonomous agents often struggle with complex, long-horizon tasks that require many sequential steps. Small reasoning errors can compound over time and cause task failure. | Research from Epoch AI shows many agents struggle with tasks requiring more than 20 sequential steps. |
| Safety | Agents with access to systems, files, or APIs can introduce security risks if safeguards are not in place. | Potential issues include unintended file deletion, execution of malicious code, and leakage of sensitive data. This is why AI red-teaming and adversarial testing are increasingly important. |
| Evaluation | Measuring agent performance is more difficult than evaluating traditional chatbots because success depends on completing multi-step workflows. | Evaluation must consider task completion, efficiency, and reliability across environments. New benchmarks built around agentic task environments are emerging to address this. |
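The Reliability row can be made concrete with a back-of-the-envelope calculation. Assume, purely for illustration, that each step of a task succeeds independently with the same probability p; then an n-step task succeeds only if every step does:

```latex
\begin{aligned}
P(\text{all } n \text{ steps succeed}) &= p^{n} \\
p = 0.95,\; n = 20 &:\quad 0.95^{20} \approx 0.36 \\
p = 0.99,\; n = 20 &:\quad 0.99^{20} \approx 0.82
\end{aligned}
```

Even 95% per-step accuracy leaves only about a one-in-three chance of finishing 20 steps cleanly, which is why per-step reliability and error recovery matter so much. Real agents break the independence assumption in both directions: errors can be correlated, and a good agent can detect and recover from some of them.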

What Comes Next: AI as the Operating Layer of the Internet

The future trajectory is clear:

AI will increasingly act as the interface between humans and software.

Instead of navigating apps ourselves, we will describe goals:

“Analyze our sales data and prepare a report.”

The agent will coordinate tools, data, and workflows automatically.

In short:

Chatbots answered questions.

Agents will run computers.


FAQ: AI Agents and Autonomous Systems

What is an AI agent?

An AI agent is a system that can perceive an environment, plan actions, and execute tasks using tools or software to achieve a goal.

How are AI agents different from chatbots?

Chatbots generate responses to prompts.

AI agents execute workflows, interacting with tools, APIs, and digital environments.

What are OpenClaw or computer-use systems?

Computer-use systems allow AI to operate software interfaces, including browsers, terminals, and applications.

They essentially allow AI models to act like human computer operators.

Are AI agents fully autonomous today?

Not yet.

Most systems still require human oversight, monitoring, and evaluation to ensure reliability and safety.

Why is human feedback still necessary?

Human evaluators help train models to:

- avoid unsafe actions

- reason correctly

- follow task objectives

This feedback is essential for reliable autonomous AI systems.

Sources

- Stanford Human-Centered AI Institute - AI Index Report 2024 & 2025

- The AI Agent Index (MIT, Stanford, Harvard researchers)

- SMART: Self-Aware Agent for Tool Overuse Mitigation (2025)

- AI Agents with Human-Like Collaborative Tools (2025)

- DeepPlanning: Benchmarking Long-Horizon Agentic Planning (2026)

- Long-Horizon Agent Planning Overview (2026)

- MEM1: Learning to Synergize Memory and Reasoning for Long-Horizon Agents (2025)

- AMA-Bench: Evaluating Long-Horizon Memory for Agentic Applications (2026)

- DeepMind - Agents That Imagine and Plan

Interested in building or evaluating AI agents and next-generation LLM systems? 📩 Contact our team to learn how we support leading AI companies with human evaluation, red teaming, and high-quality training data.

