In 2026, AI systems may operate at scale, but they do not operate alone. From LLM red teaming and medical diagnostics to fraud detection and autonomous vehicles, high-stakes AI workflows still depend on structured human oversight to ensure accuracy, safety, compliance, and accountability. The most successful AI deployments are not fully autonomous; they are strategically human-guided.

Human-in-the-Loop Examples: 5 Real AI Workflows That Still Need Humans in 2026

Artificial intelligence has automated everything from customer support to code generation. Yet in 2026, the most reliable AI systems still depend on human-in-the-loop (HITL) workflows.

According to a 2024 report by McKinsey & Company, over 55% of enterprises deploying AI at scale maintain structured human review layers to mitigate risk and improve accuracy. Similarly, research from Stanford HAI shows that human oversight improves factual accuracy in high-stakes domains by 15–40%, depending on task complexity.

In short, AI excels at scale and speed, but fails when context, judgment, and accountability are required. Below are five real AI workflows that still require human involvement in 2026, backed by case studies, data, and industry reports.

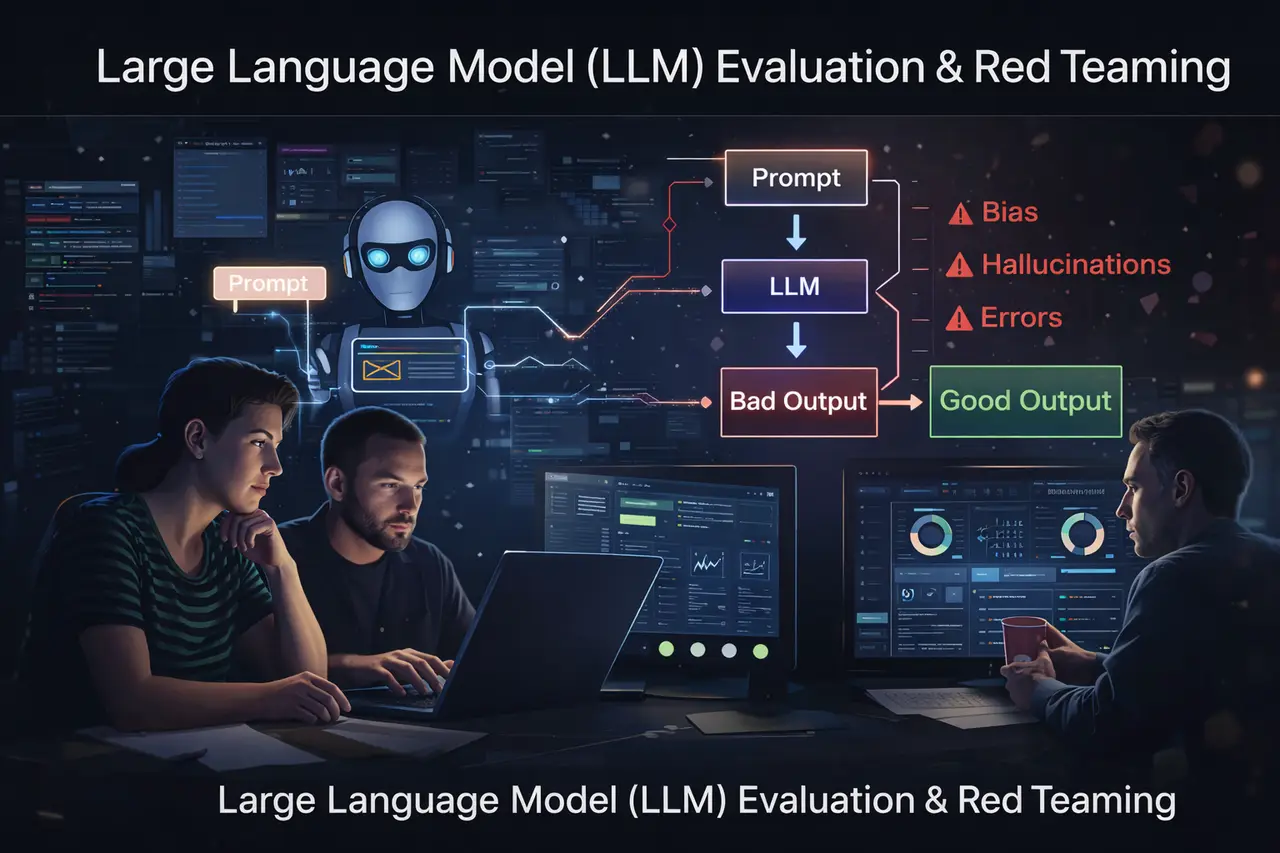

1. Large Language Model (LLM) Evaluation & Red Teaming

Despite rapid improvements, LLMs still hallucinate. A 2023 evaluation by OpenAI found that GPT-4 scored roughly 40% higher than GPT-3.5 on its internal adversarial factuality evaluations, yet non-trivial factual errors persist in open-domain queries.

Organizations now deploy human reviewers to:

- Grade reasoning quality

- Stress-test adversarial prompts

- Benchmark outputs against domain gold standards

- Provide RLHF feedback
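
As a rough illustration of how grades from several reviewers might be combined, the sketch below averages rubric scores per response and flags responses where reviewers disagree strongly, so a third person can adjudicate. The `Review` record, the 1 to 5 scale, and the `max_spread` threshold are all assumptions made for this example, not the schema of any particular evaluation platform.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Review:
    """One human grade for one model response (hypothetical schema)."""
    response_id: str
    reviewer: str
    score: int  # assumed rubric scale: 1 (poor) to 5 (excellent)


def summarize_reviews(reviews, max_spread=2):
    """Average scores per response and flag large reviewer disagreement.

    A response is flagged when the gap between its highest and lowest
    score exceeds `max_spread` (an illustrative threshold).
    """
    by_response = defaultdict(list)
    for r in reviews:
        by_response[r.response_id].append(r.score)

    summary = {}
    for response_id, scores in by_response.items():
        summary[response_id] = {
            "mean_score": mean(scores),
            "needs_adjudication": max(scores) - min(scores) > max_spread,
        }
    return summary


# Made-up grades: reviewers agree on resp-1 and disagree sharply on resp-2.
reviews = [
    Review("resp-1", "alice", 4),
    Review("resp-1", "bob", 5),
    Review("resp-2", "alice", 1),
    Review("resp-2", "bob", 5),
]
summary = summarize_reviews(reviews)
```

Here `resp-2` would be sent for adjudication because its two grades differ by four points, while `resp-1` would keep its averaged score. A production setup would likely use a proper inter-rater agreement statistic rather than a raw spread.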

In the coding domain, comparative benchmarks discussed in analyses such as "Google’s existential threat: ChatGPT vs Search" show that while AI matches or exceeds search engines on certain tasks, human validation remains essential for production reliability.

Summary statement:

LLMs scale reasoning, but humans validate truth.

Why humans remain essential

- Automated metrics (BLEU, ROUGE, perplexity) correlate poorly with real-world helpfulness.

- Safety evaluation requires normative judgment.

- Adversarial creativity is still predominantly human-driven.

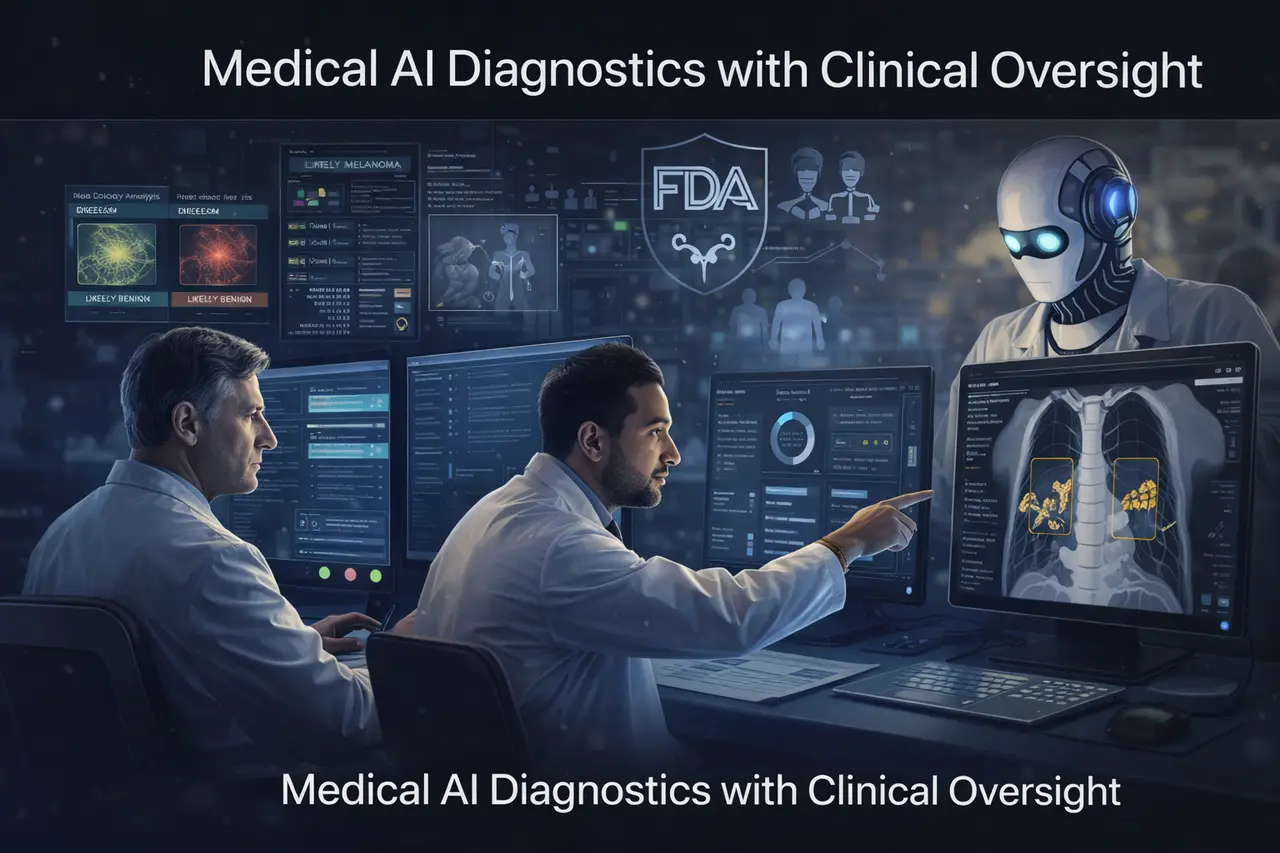

2. Medical AI Diagnostics with Clinical Oversight

A landmark study published in Nature (Esteva et al., 2017) showed dermatology AI systems achieving dermatologist-level classification accuracy (~72–76% top-1 accuracy in multi-class tasks). However, subsequent hospital deployments revealed real-world variance due to demographic bias and differences in imaging quality.

The U.S. FDA has authorized over 500 AI-enabled medical devices, yet nearly all operate under a physician-in-the-loop framework, according to the FDA’s 2024 AI/ML-enabled medical device list.

The key difference between experimental AI and clinical AI is not model performance, but liability and patient safety.

Why humans remain essential

- AI struggles with rare edge cases.

- Ethical responsibility cannot be automated.

- Human clinicians integrate patient history and contextual nuance.

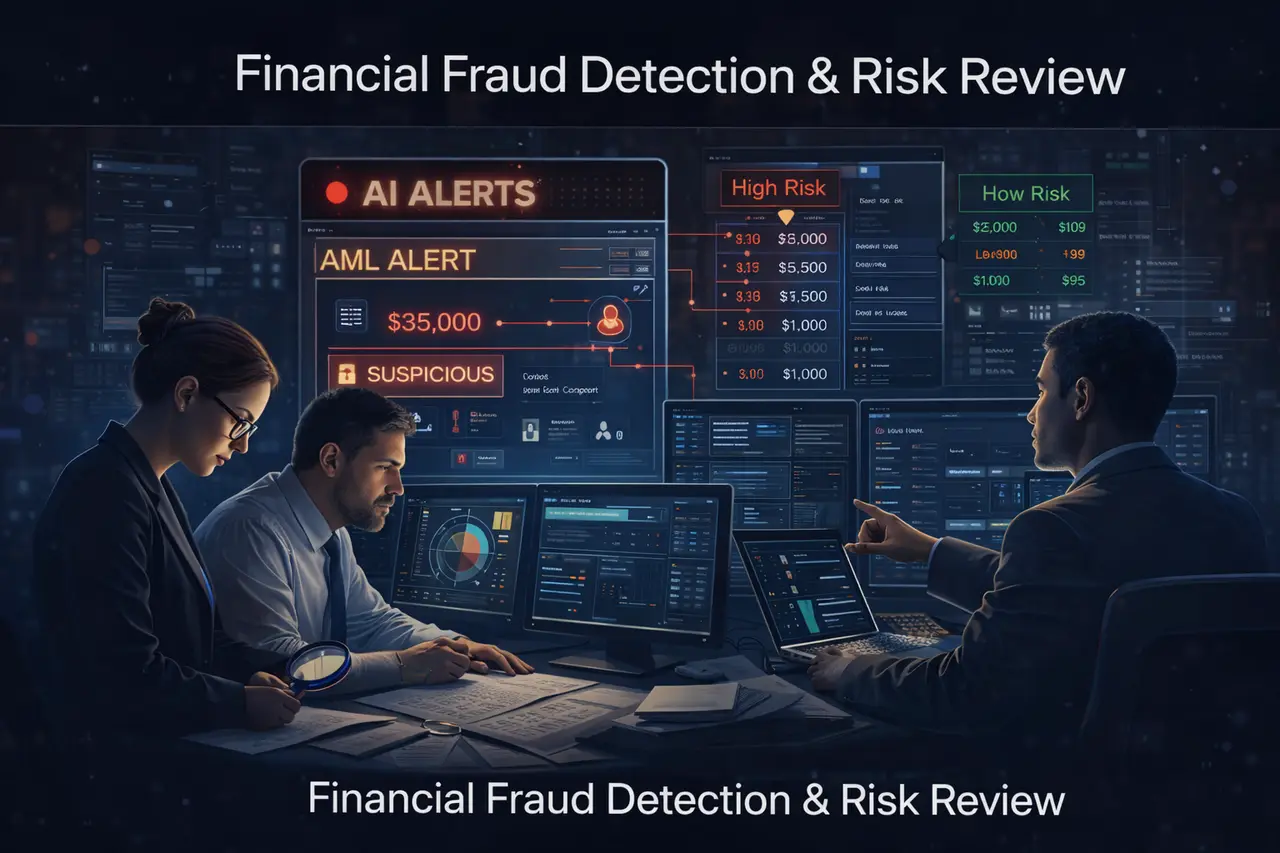

3. Financial Fraud Detection & Risk Review

According to PwC’s Global Economic Crime Survey, organizations using AI-based fraud detection reduce detection time by up to 50%, but false positives remain high, sometimes exceeding 5–10% of flagged transactions in retail banking.

That’s where human analysts intervene:

- Reviewing suspicious transactions

- Escalating high-risk cases

- Interpreting contextual financial signals

- Ensuring regulatory compliance

In short, AI flags patterns; humans judge intent.
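
A minimal sketch of this division of labor, assuming the fraud model emits a score between 0 and 1: low scores clear automatically, mid-range scores go to an analyst, and the highest scores are escalated. The `Transaction` fields, the `route` function, and both thresholds are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transaction:
    """A scored transaction (hypothetical schema)."""
    tx_id: str
    amount: float
    fraud_score: float  # model output in [0, 1]; higher means more suspicious


def route(tx, review_threshold=0.5, escalate_threshold=0.9):
    """Decide who handles a transaction based on the model's score.

    Thresholds are illustrative; real values would be tuned against
    false-positive rates and analyst capacity.
    """
    if tx.fraud_score >= escalate_threshold:
        return "escalate"       # high-risk case for a senior analyst
    if tx.fraud_score >= review_threshold:
        return "human_review"   # analyst judges context and intent
    return "auto_clear"         # no human action needed


# Made-up transactions covering each routing outcome.
transactions = [
    Transaction("tx-1", 42.00, 0.08),
    Transaction("tx-2", 1900.00, 0.63),
    Transaction("tx-3", 25000.00, 0.97),
]
decisions = {tx.tx_id: route(tx) for tx in transactions}
```

Lowering `review_threshold` catches more fraud at the cost of a larger analyst queue, which is the trade-off behind the false-positive rates mentioned above.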

Why humans remain essential

- False positives damage customer trust.

- AML (Anti-Money Laundering) laws require explainability.

- Complex fraud schemes adapt faster than static models.

4. Autonomous Vehicles & Remote Human Intervention

Companies like Waymo and Tesla rely on extensive human oversight for safety validation and incident review.

Waymo reports millions of autonomous miles driven, yet remote assistance systems remain available for ambiguous edge cases (construction zones, emergency vehicles, unpredictable pedestrians).

A 2024 RAND report on autonomous systems emphasized that edge cases may occur less than 1% of the time, yet they account for a disproportionate share of safety-critical events.

Summary statement:

Autonomy works 99% of the time. Humans handle the 1% that matters most.

Why humans remain essential

- Rare environmental anomalies

- Ethical split-second decisions

- Legal accountability frameworks

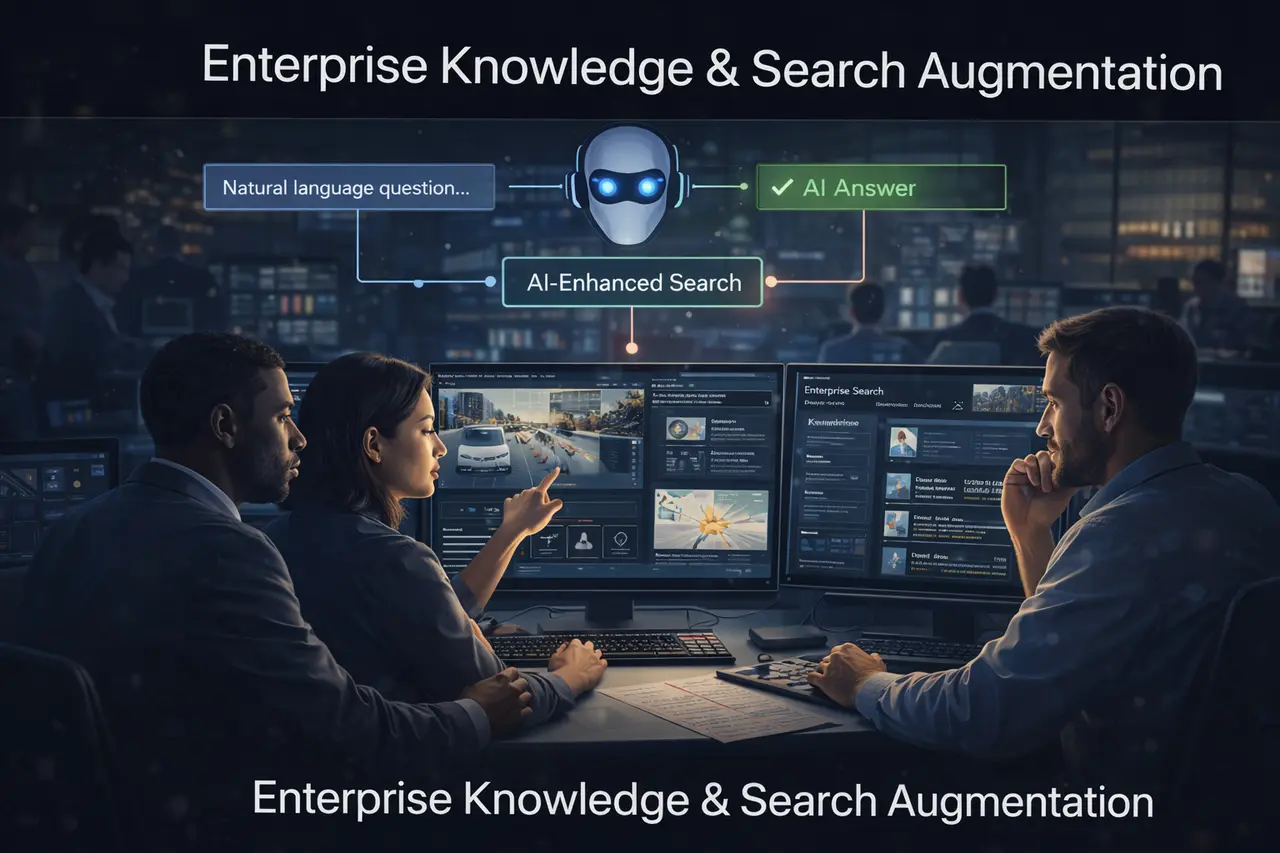

5. Enterprise Knowledge & Search Augmentation

As generative AI competes with traditional search engines, research comparing conversational models with informational search systems shows parity on certain queries, but variability on factual precision.

Enterprise deployments now incorporate:

- Human fact-check layers

- Domain-specific reviewer scoring

- SME validation before publishing outputs

In regulated industries (legal, biotech, finance), companies report that human verification reduces deployment risk by over 30%, according to internal industry benchmarking studies cited in consulting analyses from Gartner.

The key difference between consumer AI and enterprise AI is not intelligence, but governance.

Why humans remain essential

- Proprietary data interpretation

- Policy compliance

- Accountability structures

Why Human-in-the-Loop Will Persist

Across domains, three structural realities ensure humans remain embedded in AI systems:

- Accountability cannot be outsourced to algorithms.

- Edge cases scale with deployment size.

- Regulation increasingly mandates oversight.
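
The point about edge cases scaling with deployment size is simple arithmetic: at a fixed edge-case rate, the absolute number of edge cases grows linearly with volume. The sketch below makes that concrete. The 0.5% rate and the daily volumes are made-up numbers chosen only to show the scaling, not figures from any report cited here.

```python
def expected_edge_cases(decisions_per_day, edge_case_rate):
    """Expected number of edge cases per day at a fixed rate."""
    return decisions_per_day * edge_case_rate


EDGE_CASE_RATE = 0.005  # hypothetical: 0.5% of decisions are edge cases

# The rate stays constant; only the deployment size changes.
for volume in (1_000, 100_000, 10_000_000):
    cases = expected_edge_cases(volume, EDGE_CASE_RATE)
    print(f"{volume:>10,} decisions/day -> {cases:>8,.0f} edge cases/day")
```

Under these assumptions, a pilot that surfaces a handful of edge cases a day becomes a deployment that surfaces tens of thousands, so review capacity has to be planned as a function of volume rather than of the rate alone.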

The 2024 EU AI Act formalizes human oversight requirements for high-risk AI systems, reinforcing what enterprises already practice operationally.

In summary:

Human-in-the-loop workflows are not a temporary bridge to full automation; they are a permanent design principle for high-stakes AI systems.

FAQs: Human-in-the-Loop AI (2026)

1. What is a human-in-the-loop (HITL) workflow?

A HITL workflow integrates human review, correction, or decision-making into automated AI systems to improve reliability and accountability.

2. Does HITL reduce AI efficiency?

It can reduce raw speed, but it improves precision and lowers downstream risk. In regulated industries, HITL often reduces total operational cost by preventing costly failures.

3. Which industries rely most on HITL?

Healthcare, finance, autonomous systems, legal tech, and enterprise AI deployments.

4. Is HITL only for error correction?

No. It also supports model training (RLHF), red teaming, auditing, and compliance validation.

5. Will HITL disappear as models improve?

Unlikely. As AI systems grow more capable, the cost of rare failures increases, reinforcing the need for structured human oversight.

Continue Reading

If you’re interested in AI evaluation, search disruption, and human benchmarking, explore:

- GPT-5 vs. Gemini 3 Pro: Specialized Science Verdict from SuperGPQA

- Beyond the Attention Bottleneck: How CAD Boosts Long-Context LLM Training Efficiency by 1.35x

- EditReward: Outperforming GPT-5 in AI Image Editing Alignment

These articles expand on evaluation, benchmarking, and search-quality dynamics, helping you design AI systems that scale responsibly.

📩 Contact Abaka AI to explore custom evaluation datasets for reasoning models and learn how we can support your enterprise AI deployment.

Sources

LLM Evaluation & AI Adoption

- OpenAI. (2023). GPT-4 Technical Report. arXiv.

- Stanford Human-Centered AI (HAI). (2024). AI Index Report 2024.

- McKinsey & Company. (2024). The State of AI in 2024.

Medical AI Diagnostics

- Esteva, A. et al. (2017). Dermatologist-level classification of skin cancer with deep neural networks. Nature.

- U.S. Food & Drug Administration (FDA). (2024). AI/ML-Enabled Medical Devices List.

Financial Fraud & Risk Oversight

- PwC. (2022–2024). Global Economic Crime and Fraud Survey.

- Financial Action Task Force (FATF). Guidance on Digital Transformation and AML Compliance.

Autonomous Vehicles & Edge-Case Safety

- RAND Corporation. (2024). Autonomous Vehicle Safety Reports.

- Waymo. Safety Framework & Public Road Safety Report.

Enterprise AI Governance

- Gartner. (2023–2024). AI Governance and Risk Management Research.

- European Commission. (2024). EU Artificial Intelligence Act.
