Artificial intelligence agents are advancing rapidly, yet many teams still rely on static benchmarks to evaluate progress. While benchmarks provide standardization and comparability, they struggle to capture the dynamic, uncertain environments where agents actually operate. In short, benchmarks measure performance under fixed conditions, while reinforcement learning (RL) environments evaluate behavior under change. That distinction is increasingly critical in modern AI systems.

Benchmarks vs. Environments: Scaling Agent Intelligence in AI

RL Environment vs Benchmark: Why Agent Training Needs More Than Static Eval

What Is a Benchmark vs an RL Environment?

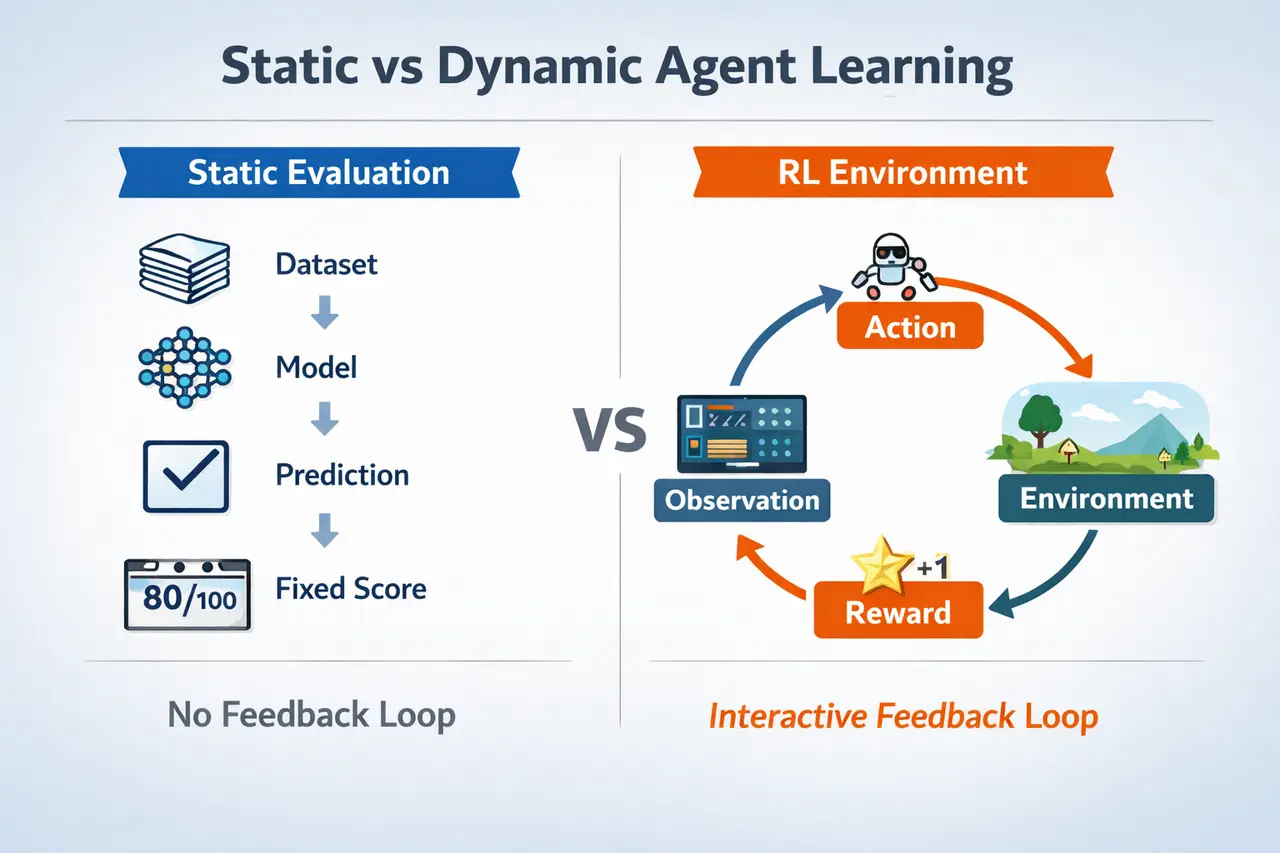

Benchmarks are standardized evaluation datasets with fixed inputs and expected outputs. Classic examples such as ImageNet and MMLU allow researchers to compare models under identical conditions, making them essential for reproducibility and progress tracking. However, most traditional benchmarks assume that the task distribution is static and that the model does not influence future inputs.

Reinforcement learning environments, by contrast, are interactive systems in which agents take actions, receive feedback, and influence future states. This paradigm has driven major breakthroughs in AI. For example, AlphaGo's strength did not come from fitting a static dataset alone: it combined supervised learning on human expert games with reinforcement learning from games of self-play and tree search (Silver et al., 2016).
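To make the contrast concrete, here is a minimal sketch in Python. Everything in it is hypothetical and chosen for illustration: `BENCHMARK` is a three-item toy dataset, and `CorridorEnv` is a made-up environment whose `reset`/`step` interface loosely follows the convention common in RL libraries. It is not taken from any of the cited systems.

```python
from dataclasses import dataclass

# Static benchmark: fixed (input, expected output) pairs.
# The model's answers never change which items it sees next.
BENCHMARK = [("2+2", "4"), ("3*3", "9"), ("10-7", "3")]


def evaluate_on_benchmark(model, items):
    """Return the fraction of items the model answers exactly right."""
    correct = sum(1 for prompt, expected in items if model(prompt) == expected)
    return correct / len(items)


@dataclass
class CorridorEnv:
    """Hypothetical toy environment: walk from cell 0 to the last cell."""
    length: int = 5
    position: int = 0

    def reset(self):
        self.position = 0
        return self.position

    def step(self, action):
        # action is -1 (left) or +1 (right); the next state depends on it.
        self.position = max(0, min(self.length - 1, self.position + action))
        done = self.position == self.length - 1
        reward = 1.0 if done else -0.1  # small cost per step, bonus at the goal
        return self.position, reward, done


def run_episode(env, policy, max_steps=50):
    """Roll out one episode and return the total reward collected."""
    state = env.reset()
    total = 0.0
    for _ in range(max_steps):
        state, reward, done = env.step(policy(state))
        total += reward
        if done:
            break
    return total
```

In the benchmark function, each item is scored in isolation. In `run_episode`, every action changes the state the policy sees next, so the score reflects a whole trajectory rather than a single answer.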

More recent work on agent evaluation reinforces this shift. Frameworks such as AgentBench (Liu et al., 2023) show that language models evaluated in interactive, multi-step settings often perform very differently than in static benchmarks, particularly on tasks requiring planning, tool use, and long-horizon reasoning.

Summary: Benchmarks evaluate outputs, while RL environments evaluate behavior.

Why Static Benchmarks Fall Short for Agents

Temporal Dynamics Are Missing

Most benchmarks evaluate single-step predictions, whereas real-world agents operate across sequences of decisions.

This limitation is evident across domains. In AlphaGo, performance gains came from optimizing long-term strategies rather than individual moves. Similarly, recent work on language agents shows that models can perform well on single-turn reasoning tasks yet struggle when required to plan across multiple steps or maintain consistency over time (Liu et al., 2023; Liang et al., 2022).
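A back-of-the-envelope calculation shows why single-step scores can mislead. The sketch below assumes, purely for illustration, that every step of a task succeeds independently with the same probability and that one failed step fails the whole task. Real agents can sometimes recover from errors, so treat this as a simplified model rather than a measurement.

```python
def episode_success_probability(per_step_success, num_steps):
    """Chance that all steps succeed, assuming independent, identical steps."""
    return per_step_success ** num_steps


# Compare how the same per-step accuracy fares as tasks get longer.
for n in (1, 5, 20, 50):
    print(n, round(episode_success_probability(0.95, n), 3))
```

Under these assumptions, a model that is 95% reliable per step completes a 20-step task only about 36% of the time.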

Summary: Agents trained only on static benchmarks often struggle with long-horizon reasoning.

No Feedback Loop for Learning

Benchmarks do not inherently provide a mechanism for learning from consequences. Once a prediction is made, the evaluation ends.

RL environments, however, are built around feedback loops. Agents receive rewards or penalties, update their policies, and improve through interaction. This paradigm has been validated across domains, from robotics to game-playing systems.
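The act, observe, update cycle described above can be sketched with one of the simplest RL settings, a multi-armed bandit. The function name `train_bandit`, the payout probabilities, and the epsilon-greedy strategy are illustrative assumptions, not a method taken from the cited work.

```python
import random


def train_bandit(payout_probs, steps=2000, epsilon=0.1, seed=0):
    """Epsilon-greedy learner: act, observe a reward, update the estimate."""
    rng = random.Random(seed)
    estimates = [0.0] * len(payout_probs)  # running mean reward per arm
    pulls = [0] * len(payout_probs)
    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: try a random arm.
            arm = rng.randrange(len(payout_probs))
        else:
            # Exploit: pick the arm that currently looks best.
            arm = max(range(len(payout_probs)), key=lambda a: estimates[a])
        # Feedback from the environment (1.0 on payout, else 0.0).
        reward = 1.0 if rng.random() < payout_probs[arm] else 0.0
        pulls[arm] += 1
        # Incremental mean update: move the estimate toward the observed reward.
        estimates[arm] += (reward - estimates[arm]) / pulls[arm]
    return estimates, pulls


estimates, pulls = train_bandit([0.2, 0.8])
print(estimates, pulls)
```

A static benchmark would stop at the first prediction. Here the learner's estimates only become useful because each reward feeds back into the next choice.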

For example, OpenAI’s work on dexterous in-hand manipulation demonstrated that policies trained in interactive simulation—combined with domain randomization—could successfully transfer to the real world despite significant physical variability (OpenAI et al., 2018; Tobin et al., 2017).

In short: Benchmarks test what a model knows, while environments train what an agent does.

Ignoring Distribution Shift

Many benchmarks assume that training and test data come from similar distributions. In real-world deployments, this assumption rarely holds.

Research on domain randomization shows that exposing agents to diverse and noisy environments during training significantly improves real-world robustness (Tobin et al., 2017). Similarly, broader evaluations such as the Stanford AI Index Report (2024) highlight that model performance can degrade substantially when systems are deployed outside benchmark conditions.
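In code, domain randomization often amounts to resampling simulator parameters at the start of every episode. The sketch below is a simplified illustration: the parameter names and ranges in `PARAM_RANGES` are hypothetical and not the ones used in the cited papers, which randomized their own sets of visual and physical properties.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    friction: float
    mass_kg: float
    sensor_noise_std: float


# Hypothetical ranges, chosen only for illustration.
PARAM_RANGES = {
    "friction": (0.5, 1.5),
    "mass_kg": (0.8, 1.2),
    "sensor_noise_std": (0.0, 0.05),
}


def sample_params(rng):
    """Draw one simulator configuration uniformly from each range."""
    return SimParams(
        **{name: rng.uniform(lo, hi) for name, (lo, hi) in PARAM_RANGES.items()}
    )


def randomized_episodes(num_episodes, seed=0):
    """Yield a fresh parameter set per episode so no two episodes match exactly."""
    rng = random.Random(seed)
    for _ in range(num_episodes):
        yield sample_params(rng)


for params in randomized_episodes(3):
    print(params)
```

The training loop would then build its simulator from each `SimParams` instance, so the policy cannot overfit to a single setting of friction, mass, or sensor noise.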

Efforts like BIG-Bench (Srivastava et al., 2022) and HELM (Liang et al., 2022) attempt to address this by expanding coverage, but they still lack true interactivity and feedback.

Summary: Benchmarks assume relative stability, while RL environments prepare agents for change.

Case Studies: Where RL Environments Outperform Benchmarks

The advantages of environment-based evaluation become clear across multiple domains.

Robotics:

OpenAI’s dexterous hand project showed that agents trained in simulation with domain randomization could transfer successfully to real-world tasks—something not achievable through static datasets alone.

Autonomous Systems:

Companies such as Waymo and Tesla rely heavily on simulation environments to expose driving systems to rare and safety-critical scenarios. These edge cases are difficult to capture in fixed datasets but essential for real-world performance.

Language Agents:

Recent work on language agents, including the ReAct prompting approach, tool-use agents, and the AgentBench evaluation suite, indicates that reasoning, planning, and tool use are exercised and measured more faithfully when models operate in interactive settings with feedback than in static prompt-response evaluations.

Key takeaway: Real-world capability emerges from interaction, not static evaluation.

Why You Need Both, But Not Equally

Benchmarks remain valuable. They enable quick comparisons, regression testing, and standardized progress tracking across models.

However, they are not sufficient for evaluating real-world capability. High benchmark scores do not guarantee robustness, adaptability, or effective long-term reasoning.

RL environments fill this gap by testing how agents behave over time, under uncertainty, and in response to feedback.

In short: Benchmarks are necessary for measurement, but environments are essential for capability.

Designing Better Evaluation Systems

The next generation of AI evaluation systems will combine both approaches. Static benchmarks can establish baseline competence, while interactive environments can stress-test agents in realistic conditions.

Recent research trends emphasize scaling not only data, but also environments—introducing stochasticity, diverse scenarios, and multi-step interaction. This shift is particularly important for agentic systems that must operate reliably in open-ended settings.
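One way to picture such a combined pipeline is a report that pairs a static accuracy gate with returns measured across seeds and noise levels. The sketch below is a hedged illustration: `noisy_corridor_return`, the slip probabilities, and the 0.8 accuracy threshold are all hypothetical choices, not a standard harness.

```python
import random
import statistics


def static_accuracy(model, items):
    """Baseline competence: exact-match accuracy on fixed items."""
    return sum(model(x) == y for x, y in items) / len(items)


def noisy_corridor_return(policy, slip_prob, seed, length=5, max_steps=50):
    """Hypothetical stochastic task: each move is reversed with prob. slip_prob."""
    rng = random.Random(seed)
    position, total = 0, 0.0
    for _ in range(max_steps):
        action = policy(position)  # expected to be -1 or +1
        if rng.random() < slip_prob:
            action = -action
        position = max(0, min(length - 1, position + action))
        if position == length - 1:
            return total + 1.0  # goal bonus ends the episode
        total -= 0.1  # small cost for every non-terminal step
    return total


def evaluate(model, policy, items, slip_probs=(0.0, 0.2, 0.4),
             seeds=range(20), min_accuracy=0.8):
    """Combine a static gate with mean and worst-case returns under noise."""
    report = {"static_accuracy": static_accuracy(model, items)}
    report["passed_baseline"] = report["static_accuracy"] >= min_accuracy
    for slip in slip_probs:
        returns = [noisy_corridor_return(policy, slip, s) for s in seeds]
        report[f"return@slip={slip}"] = {
            "mean": statistics.mean(returns),
            "worst": min(returns),
        }
    return report
```

Reporting the worst case alongside the mean is a deliberate choice here: an agent that looks fine on average can still fail badly on a few seeds, which is exactly what a single benchmark score tends to hide.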

Summary: The key difference between benchmarks and environments is not just what they measure, but whether they test adaptability.

Key Takeaways

Benchmarks provide a controlled and standardized way to evaluate models, but they fall short in capturing dynamic, real-world behavior. Reinforcement learning environments enable agents to learn through interaction, adapt to changing conditions, and optimize long-term outcomes.

Final takeaway: Benchmarks measure performance, but environments build intelligence.

Further Reading

If you’re interested in AI evaluation, search disruption, and human benchmarking, explore:

- GPT-5 vs. Gemini 3 Pro: Specialized Science Verdict from SuperGPQA

- Beyond the Attention Bottleneck: How CAD Boosts Long-Context LLM Training Efficiency by 1.35x

- EditReward: Outperforming GPT-5 in AI Image Editing Alignment

These articles expand on evaluation, benchmarking, and search-quality dynamics, helping you design AI systems that scale responsibly.

📩 Contact Abaka AI to explore custom evaluation datasets for reasoning models and learn how we can support your enterprise AI deployment.

FAQs

- What is the main limitation of AI benchmarks?

They are static and do not capture interaction, feedback, or sequential decision-making.

- Why are RL environments important for agents?

They allow agents to learn from consequences, adapt to change, and improve over time.

- Can benchmarks and RL be used together?

Yes. Benchmarks provide baseline evaluation, while RL environments test real-world capability.

- Do higher benchmark scores mean better agents?

Not necessarily. Many systems perform well on benchmarks but struggle in dynamic environments.

- What is changing in AI evaluation today?

There is a growing shift toward interactive, multi-step, and environment-based evaluation frameworks.

Sources

Silver, David, et al. “Mastering the Game of Go with Deep Neural Networks and Tree Search.” Nature, vol. 529, no. 7587, 2016, pp. 484–489.

OpenAI, et al. “Learning Dexterous In-Hand Manipulation.” arXiv, 2018, https://arxiv.org/abs/1808.00177

Tobin, Josh, et al. “Domain Randomization for Transferring Deep Neural Networks from Simulation to the Real World.” IROS, 2017, https://arxiv.org/abs/1703.06907

Stanford Institute for Human-Centered Artificial Intelligence. AI Index Report 2024. Stanford University, 2024, https://aiindex.stanford.edu/report/

Liu, Xiao, et al. “AgentBench: Evaluating LLMs as Agents.” arXiv, 2023, https://arxiv.org/abs/2308.03688

Liang, Percy, et al. “Holistic Evaluation of Language Models (HELM).” arXiv, 2022, https://arxiv.org/abs/2211.09110

Srivastava, Aarohi, et al. “Beyond the Imitation Game: Quantifying and Extrapolating the Capabilities of Language Models (BIG-Bench).” arXiv, 2022, https://arxiv.org/abs/2206.04615

