AI can now turn live, working code into fully editable Figma designs—finally closing the loop between prompt-generated UI and real product decisions.

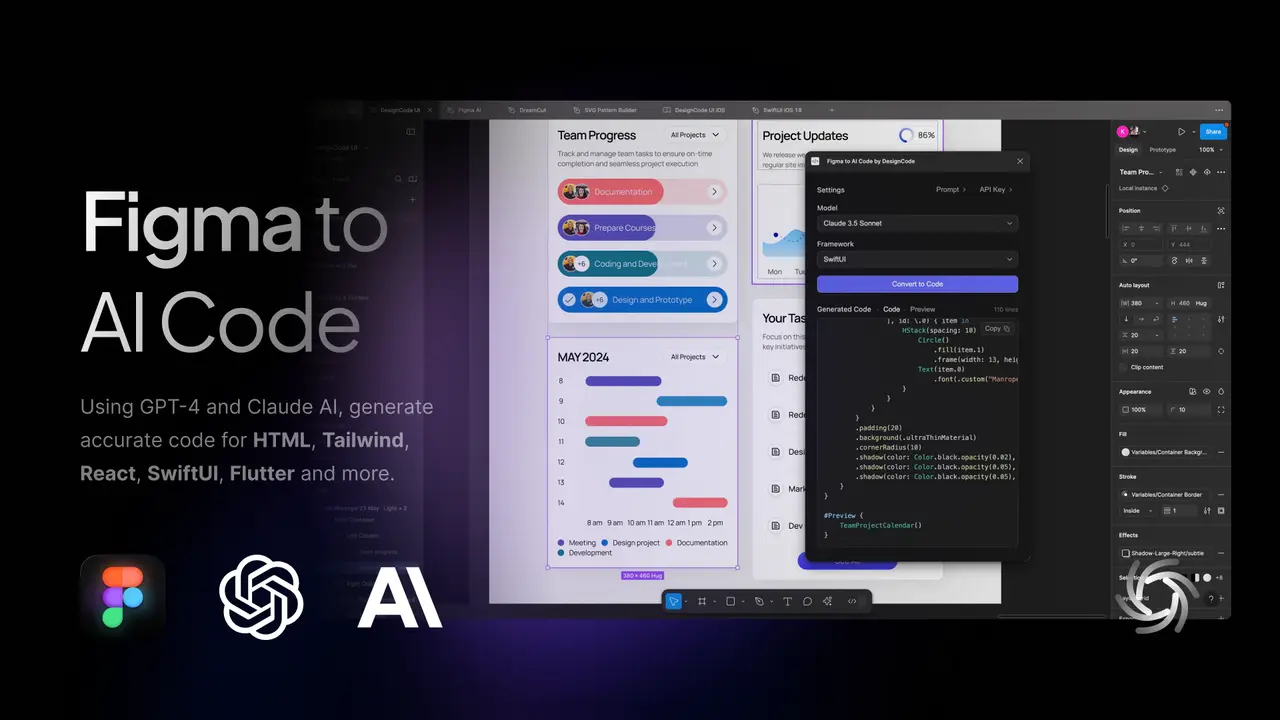

Claude Code x Figma: How to Turn AI-Generated UI into Editable Designs


AI tools can already generate interfaces from a prompt, but one major bottleneck has remained: editability.

AI-generated interfaces often arrive as static images, loosely structured components, or non-semantic code. These outputs are helpful, but designers can’t easily tweak the layouts, engineers struggle to integrate them into design systems, and product teams have to manually reconstruct generated interfaces inside Figma before they can move forward.

Now, that workflow is beginning to change.

With Claude Code and its integration with Figma’s new capture-to-canvas workflow, teams can generate interface code and translate live browser-based UI directly into editable Figma design files, turning AI-generated UI into something designers can actually work with (Figma, 2026).

The Real Bottleneck: Choosing What to Ship

AI coding tools have made it trivially easy to go from idea to working prototype. You describe what you want, and you get a functioning interface in minutes.

The bottleneck has not disappeared; it has just moved.

The question is no longer “How do we build this?” but “How do we decide which version to ship?”

That decision process typically lives on the canvas, inside tools like Figma, where teams compare design options side by side, leave comments, and align before committing to a direction (Muzli, 2026). Until recently, there was no clean way to bring a coded prototype back into that collaborative space without resorting to screenshots, screen recordings, or asking teammates to run local builds.

As UI grows beyond a single screen, solo code-first exploration can become a constraint. Requesting feedback often means introducing friction at the exact moment the team would benefit from going wider and exploring more options together.

From Code to Canvas: How the Integration Works

Figma’s partnership with Anthropic addresses this gap by enabling teams to capture running UI directly from the browser and convert it into Figma-compatible frames (Seeking Alpha, 2026).

The workflow typically follows four steps:

- Build or iterate on a UI using Claude Code in a local dev server, staging environment, or production browser session.

- Capture the screen using the integration, which grabs the live browser state.

- Paste into Figma, where the captured screen lands as an editable design artifact—not a flat image, but a real frame.

- Collaborate directly on the canvas by annotating, duplicating, rearranging, and comparing options without requiring code access.

Teams can do more than just view the UI (Figma, 2026). They can annotate what’s working, call out what’s unclear, and explore divergent ideas without needing to context switch into a new environment or edit multiple code files.

Users can also capture multiple screens in a single session, which speeds up the exploration of multi-screen flows such as onboarding, checkout journeys, or settings pages (Figma, 2026). Entire flows can be preserved in sequence on the canvas, allowing teams to compare variants, test layout changes, and keep rejected ideas visible for future reference.

Collaboration Happens on the Canvas

Once a coded UI lands inside Figma, designers and product stakeholders can work in their native environment.

Multiple AI-generated variants can be placed side by side to identify patterns, gaps, or inconsistencies across flows (Muzli, 2026). Frames can be duplicated and rearranged to test structural changes without modifying code. Comments can be left on fully built interfaces rather than approximations. This ensures that PMs, designers, and engineers react to the same artifact at the same level of fidelity.

Design system alignment becomes easier as well. Teams can evaluate whether AI-generated UI matches existing components, tokens, and layout patterns before those inconsistencies reach production.
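As a rough illustration of what such an alignment check could look like, the sketch below walks a simplified layer tree and flags fill colors that match no design token. The `Layer` structure, the token names, and the `find_off_system_colors` helper are all hypothetical and exist only for this example; they are not part of Figma's or Claude Code's API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Layer:
    """A simplified, hypothetical stand-in for one layer in a captured frame."""
    name: str
    fill: str | None = None  # hex color such as "#1A73E8", or None if unfilled
    children: list[Layer] = field(default_factory=list)


# Hypothetical design tokens: token name -> hex color.
TOKENS = {
    "color.primary": "#1A73E8",
    "color.surface": "#FFFFFF",
    "color.text": "#202124",
}


def find_off_system_colors(root: Layer, tokens: dict[str, str]) -> list[tuple[str, str]]:
    """Return (layer name, color) pairs whose fill matches no token value."""
    allowed = {value.upper() for value in tokens.values()}
    problems = []
    stack = [root]
    while stack:
        layer = stack.pop()
        # Compare case-insensitively so "#1a73e8" and "#1A73E8" are treated alike.
        if layer.fill is not None and layer.fill.upper() not in allowed:
            problems.append((layer.name, layer.fill))
        stack.extend(layer.children)
    return problems


frame = Layer(
    "Checkout",
    fill="#FFFFFF",
    children=[
        Layer("Pay button", fill="#1a73e8"),
        Layer("Promo banner", fill="#FF00AA"),
    ],
)
off_system = find_off_system_colors(frame, TOKENS)
```

Here only the promo banner uses a color outside the token set, so it is the one layer a team would want to review on the canvas before the inconsistency reaches production.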

This is where the role of the modern designer departs from the traditional one. When AI generates multiple variants in minutes, the bottleneck becomes choosing, and the canvas is where choosing happens.

The Return Trip: Canvas Back to Code

The reverse direction matters just as much.

Teams can select a frame in Figma and prompt Claude Code with a link to that frame to generate production-ready code that respects their design system. This includes reading component structures, tokens, and styling variables to produce an implementation that aligns with system standards.
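To make the "link to a frame" idea concrete, here is a minimal sketch of how a frame link might be broken into the identifiers a tool would need. It assumes the link follows the common `https://www.figma.com/design/<file key>/<name>?node-id=<id>` pattern (or the older `/file/` form), and that hyphenated node ids in the URL correspond to colon-separated ids. The `parse_figma_link` helper and the sample file key are hypothetical; how Claude Code actually resolves a link is not described in the sources.

```python
from __future__ import annotations

from urllib.parse import parse_qs, urlparse


def parse_figma_link(url: str) -> tuple[str, str | None]:
    """Split a Figma file link into (file key, node id or None).

    Assumes the path looks like /design/<key>/<name> or /file/<key>/<name>.
    """
    parts = urlparse(url)
    segments = [segment for segment in parts.path.split("/") if segment]
    if len(segments) < 2 or segments[0] not in {"design", "file"}:
        raise ValueError(f"Not a recognized Figma file link: {url}")
    file_key = segments[1]

    # parse_qs also decodes percent-encoded values such as "12%3A34".
    node_ids = parse_qs(parts.query).get("node-id")
    node_id = node_ids[0].replace("-", ":") if node_ids else None
    return file_key, node_id


# "AbC123" is a made-up file key used only for illustration.
key, node = parse_figma_link(
    "https://www.figma.com/design/AbC123/Checkout?node-id=12-34"
)
```

Keeping the parsing separate from any network call makes it easy to validate a pasted link before handing it to a code-generation step.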

This creates a true round-trip workflow (Muzli, 2026):

Design in Figma → Generate code with Claude → Capture working UI back to Figma → Refine on canvas → Push updates back to code

Each cycle preserves context. Nothing gets lost in translation because both environments share a connected system of record. For teams working with AI design tools, this is one of the first practical closed loops between design and development.

From Interface Iteration to Structured Design Data

There is also a less obvious implication: data.

Each AI-generated interface that is refined inside Figma becomes structured design data, which contains information about layout relationships, component hierarchies, and interaction patterns. Over time, these edited interfaces can serve as training or evaluation data for downstream design-to-code systems, multimodal agents, or UI-generation models.

As AI-assisted design becomes more prevalent, teams may find themselves managing growing repositories of structured UI data that require annotation, classification of component usage, interface evaluation, and metadata enrichment for model training. This introduces new challenges around data quality, consistency, and scalability, particularly for organizations building internal AI tooling.
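A small sketch can show what "structured design data" might mean in practice. The example below represents one screen as a tree of nodes and derives two simple features from it: how often each component type is used, and how deeply the hierarchy is nested. The `Node` schema and both helper functions are assumptions made for illustration, not a format defined by Figma or Claude Code.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Node:
    """Hypothetical record for one element in an edited interface."""
    kind: str  # component type, e.g. "Frame", "Button", "TextField"
    name: str
    children: list[Node] = field(default_factory=list)


def component_usage(root: Node) -> Counter:
    """Count how many nodes of each component type appear in the tree."""
    counts: Counter = Counter()
    stack = [root]
    while stack:
        node = stack.pop()
        counts[node.kind] += 1
        stack.extend(node.children)
    return counts


def max_depth(root: Node) -> int:
    """Return the number of levels in the hierarchy, counting the root as 1."""
    return 1 + max((max_depth(child) for child in root.children), default=0)


screen = Node("Frame", "Sign up", [
    Node("TextField", "Email"),
    Node("TextField", "Password"),
    Node("Frame", "Actions", [Node("Button", "Submit")]),
])
usage = component_usage(screen)
depth = max_depth(screen)
```

Features like these are the kind of metadata a team might attach to each edited interface when preparing it for annotation or model evaluation.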

Abaka AI helps organizations curate, annotate, and evaluate complex multimodal datasets, including UI layouts, interface components, and interaction patterns, so that teams can build more accurate design-aware AI systems.

Whether you're training UI-generation models or evaluating agent-driven product workflows, Abaka provides scalable data pipelines to support production AI environments.

Get in touch to learn how Abaka can support your AI design workflows.

Frequently Asked Questions (FAQ)

Can Claude generate UI designs directly in Figma?

Claude Code generates interface code, and the running UI can then be captured from a browser session and pasted into Figma as editable frames. This lets designers refine layouts without manually rebuilding the generated interface.

What is AI-generated UI?

AI-generated UI refers to user interface layouts or components created automatically by machine learning models based on prompts, specifications, or behavioral data rather than manual design processes.

Why is editable UI important for AI design tools?

Editable outputs allow designers to refine generated interfaces, align them with design systems, and collaborate with engineers without recreating UI elements from scratch.

Can AI-generated interfaces be used as training data?

Yes. Edited AI-generated interfaces can be used as structured datasets for training or evaluating multimodal AI systems that generate layouts, predict component usage, or assist in product design workflows.


Sources

- Seeking Alpha (2026). https://seekingalpha.com/news/4552544-figma-teams-up-with-anthropic-to-convert-ai-code-into-designs

- Muzli (2026). https://muz.li/blog/claude-code-to-figma-how-the-new-code-to-canvas-integration-works/

- Figma (2026). https://www.figma.com/blog/introducing-claude-code-to-figma/

