To build effective training data for terminal-native agents, teams must create executable, verifiable, and complete coding environments with clear evaluation criteria. AB-Terminal Bench achieves this through containerized tasks, pytest-based verification, oracle solutions, and multi-stage agent pipelines. In practice, structured task design and evaluation rigor, not raw data scale, determine whether coding agents improve after training.

Terminal Agent Training Data: How AB-Terminal Bench Improves Coding AI

AB-Terminal Bench: Training Data for Terminal-Native Agents

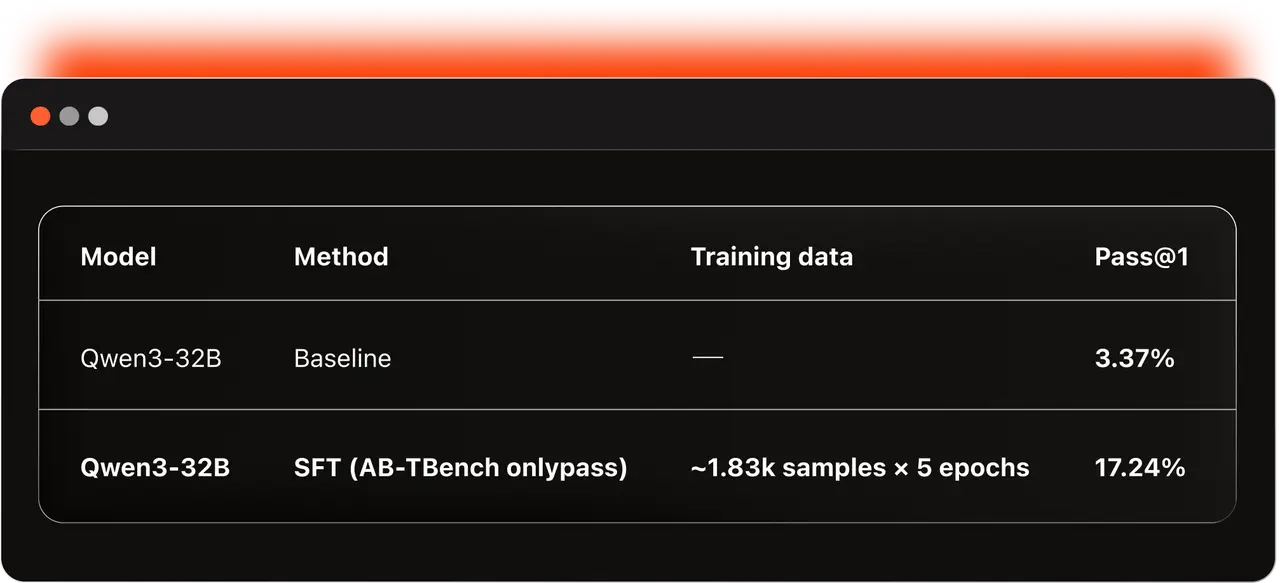

AB-Terminal Bench is a post-training corpus of containerized terminal-coding tasks, produced through an agent-driven pipeline for training and evaluating agentic coding systems. A single round of supervised fine-tuning on 1.8k filtered samples moves Qwen3-32B from 3.37% to 17.24% pass@1 on a 120-task held-out eval slice under the Terminus-2 harness.

The field is moving fast enough that this kind of data is becoming the rate-limiter. On the current Vals AI leaderboard, Claude Opus 4.7, Gemini 3.1 Pro Preview, and GPT-5.3 Codex sit at 68%, 67%, and 64% pass@1 on Terminal-Bench 2.0. Eighteen months ago, the same family of coding agents was below 15% on comparable tasks. METR's March 2025 analysis reported agent task-completion horizons doubling roughly every seven months over 2019–2025, accelerating to roughly every four months in 2024–2025. Most of the gain comes from post-training: more task variety, better tool-use trajectories, and reinforcement learning on verifier-graded rollouts.

AB-Terminal Bench is independent of the official Terminal-Bench benchmark. It is produced internally at Abaka, but follows the Terminal-Bench taxonomy and Harbor-compatible runtime conventions because that stack has become the reference standard for terminal-agent work. Each task includes a self-contained Docker environment, a natural-language brief, a pytest-based verifier, and an oracle solution that proves the task is solvable. Where tasks derive from open-source materials such as GitHub Issues, pull requests, or Kaggle notebooks, we preserve attribution and apply source-specific license review before inclusion.

The corpus spans five categories of engineering work that together cover most of what a software engineer does day-to-day:

- Bugfix — locate and patch a defect inside an unfamiliar repository

- New Feature — understand existing architecture and extend it

- Performance Optimization — find a bottleneck and improve a measurable target

- ML / Data Science — end-to-end modeling against a metric threshold

- ETL / Data Processing — build a deterministic pipeline with schema and value-level guarantees

Task example

Below is a task from the ML/DS category, derived from a Kaggle materials-science competition. The agent receives a dataset of material structures (a train.json with labeled rows and a test.json without labels) and is asked to train a regression model predicting a numeric property called hform.

Task brief excerpt. Predict the target column hform. Use an 80/20 train/validation split with random_state=42. Feature pipeline must be deterministic and identical for train and test. Validation RMSE must be ≤ 0.20. Write submission.csv, a valid.txt containing the RMSE, and a main.py that runs the full pipeline end-to-end.
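The split and metric in the brief can be sketched with the standard library alone. The function names below are hypothetical, and a real solution would more likely call scikit-learn's train_test_split with random_state=42, which yields a different (but equally fixed) partition. The point of the sketch is where determinism comes from: a fixed input order and a private, seeded RNG.

```python
import math
import random


def split_80_20(ids, seed=42):
    """Deterministic 80/20 train/validation split of row ids.

    Stdlib stand-in for a seeded train_test_split; the exact partition
    differs from scikit-learn's, but it is identical on every run.
    """
    order = sorted(ids)            # fix input order so the shuffle is reproducible
    rng = random.Random(seed)      # private RNG: no dependence on global random state
    rng.shuffle(order)
    n_val = (len(order) + 4) // 5  # ceil(20% of rows) go to validation
    return order[n_val:], order[:n_val]


def rmse(y_true, y_pred):
    """Root-mean-squared error between two equal-length, non-empty sequences."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("need two non-empty sequences of equal length")
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))
```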

The verifier is a pytest suite that encodes the brief's acceptance criteria. It runs 12 assertions on the agent's response, including these four:

- Schema alignment. submission.csv must contain exactly [id, hform] in that column order, with the same row count and row ordering as test.json. Weak agents often produce outputs that look plausible but swap columns or misalign rows.

- Numeric validity. Every prediction has to be a finite number. Catches agents whose model silently returns NaN on some test rows.

- Accuracy threshold. The RMSE in valid.txt is ≤ 0.20. A fixed acceptance bar, not grade-on-a-curve.

- Reproducibility. main.py must run standalone inside the container and produce a bit-identical submission on a second run. Catches notebook-style solutions with hidden environment dependencies or nondeterministic training.
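The four checks can be sketched as plain Python functions over in-memory file contents, so they run without a container. This is a hypothetical illustration, not the task's actual test file: the function names are invented, in a real pytest suite each check would be a test_ function reading the files the agent left behind, and the reproducibility check would run main.py twice (for example via subprocess.run) before comparing the two outputs.

```python
from __future__ import annotations

import csv
import hashlib
import io
import math


def check_schema(submission_csv: str, test_ids: list[str]) -> None:
    """Columns must be exactly [id, hform]; ids must match test.json in count and order."""
    rows = list(csv.reader(io.StringIO(submission_csv)))
    assert rows, "submission.csv is empty"
    assert rows[0] == ["id", "hform"], f"bad header: {rows[0]}"
    ids = [r[0] for r in rows[1:]]
    assert ids == test_ids, "row count or row order differs from test.json"


def check_finite(submission_csv: str) -> None:
    """Every prediction must parse as a finite float (no NaN, no inf)."""
    for row in csv.DictReader(io.StringIO(submission_csv)):
        try:
            value = float(row["hform"])
        except (TypeError, ValueError):
            raise AssertionError(f"unparseable prediction for id {row['id']}") from None
        assert math.isfinite(value), f"non-finite prediction for id {row['id']}"


def check_rmse(valid_txt: str, threshold: float = 0.20) -> None:
    """Fixed acceptance bar on the RMSE reported in valid.txt."""
    assert float(valid_txt.strip()) <= threshold, "validation RMSE above threshold"


def check_reproducible(first_run: bytes, second_run: bytes) -> None:
    """Two standalone runs of main.py must yield byte-identical submissions."""
    h1 = hashlib.sha256(first_run).hexdigest()
    h2 = hashlib.sha256(second_run).hexdigest()
    assert h1 == h2, f"submission changed between runs: {h1[:12]} != {h2[:12]}"
```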

Four categories of check, covering four distinct classes of failure. A strong model can write a plausible task brief in one shot. Writing the full rubric, and getting every assertion to actually fail on the agents it should fail on, is the harder part. Across the corpus, Hard-tier tasks like this one typically carry 10 or more independent checks per task; easier tasks carry fewer, but the shape is the same: multiple assertions, covering distinct failure modes.

Building a task

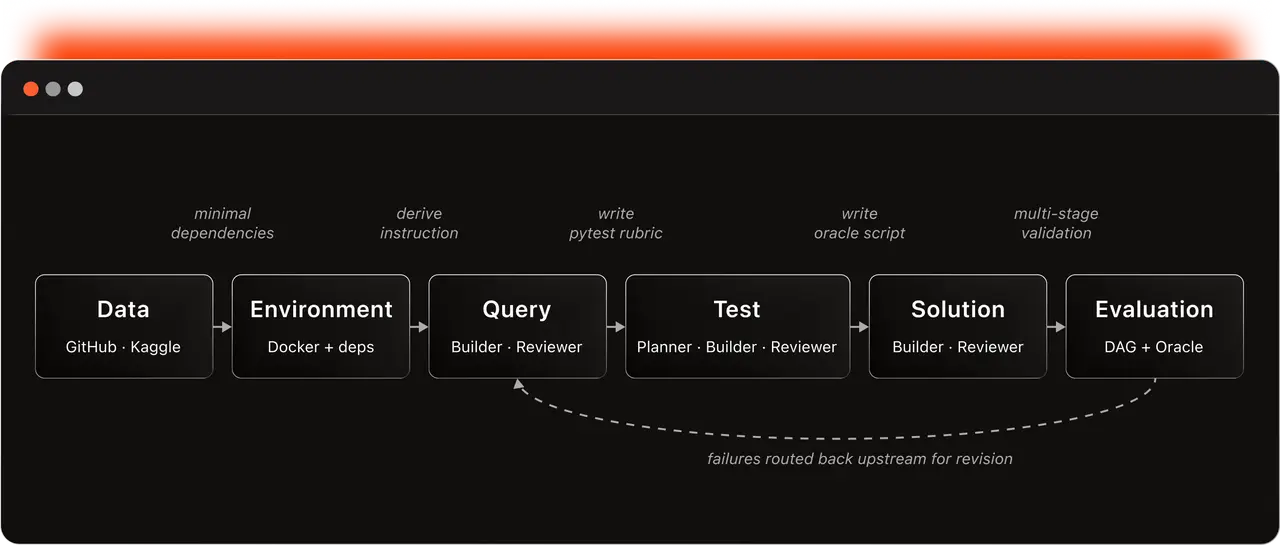

The pipeline has five stages. The first four each produce one artifact of the final task; the fifth validates the assembled result.

Environment parses the source material (a Kaggle notebook with its dataset, a GitHub Issue/PR pair, or an algorithm repository) and produces a minimal Dockerfile plus the data bundle the agent will need. Query turns the source material into the natural-language instruction and task metadata. Test produces the pytest rubric. Solution produces the oracle script. Evaluation validates that the completed candidate works as a coherent task.

Each of the first four stages runs a small chain of agents: a Builder that drafts the artifact, then a Reviewer that checks it against a rubric specific to that stage. Tests get an extra Planner stage because they are the most failure-prone output. Without a Planner to decompose what needs to be verifiable, a Builder tends to write assertions that match its own mental model of the task rather than the task's actual specification.
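A minimal Python sketch of that stage structure follows, assuming each agent can be modeled as a plain function. The Stage, run_stage, and build_task names, the callable signatures, and the three-round redraft limit are all hypothetical; the text does not say how many rounds a stage gets before a task is rejected. Only a stage given a Planner (here, Test) builds from an explicit plan.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical agent signatures; real Builders and Reviewers would be model calls.
Builder = Callable[[str, Optional[str], Optional[str]], str]  # (source, plan, feedback) -> draft
Reviewer = Callable[[str, str], Optional[str]]                 # (source, draft) -> feedback or None
Planner = Callable[[str], str]                                 # source -> what must be verifiable


@dataclass
class Stage:
    name: str
    build: Builder
    review: Reviewer
    plan: Optional[Planner] = None  # only the Test stage gets a Planner


def run_stage(stage: Stage, source: str, max_rounds: int = 3) -> str:
    """Draft, review, and redraft until the Reviewer raises no objections."""
    plan = stage.plan(source) if stage.plan else None
    feedback = None
    for _ in range(max_rounds):
        draft = stage.build(source, plan, feedback)
        feedback = stage.review(source, draft)
        if feedback is None:  # Reviewer accepted the artifact
            return draft
    raise RuntimeError(f"{stage.name}: rejected after {max_rounds} rounds")


def build_task(source: str, stages: list[Stage]) -> dict[str, str]:
    """Run the artifact stages in order; any rejection drops the whole task."""
    return {stage.name: run_stage(stage, source) for stage in stages}
```

Passing the Reviewer's feedback back into the next Builder call is one simple way to make redrafts targeted rather than starting from scratch.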

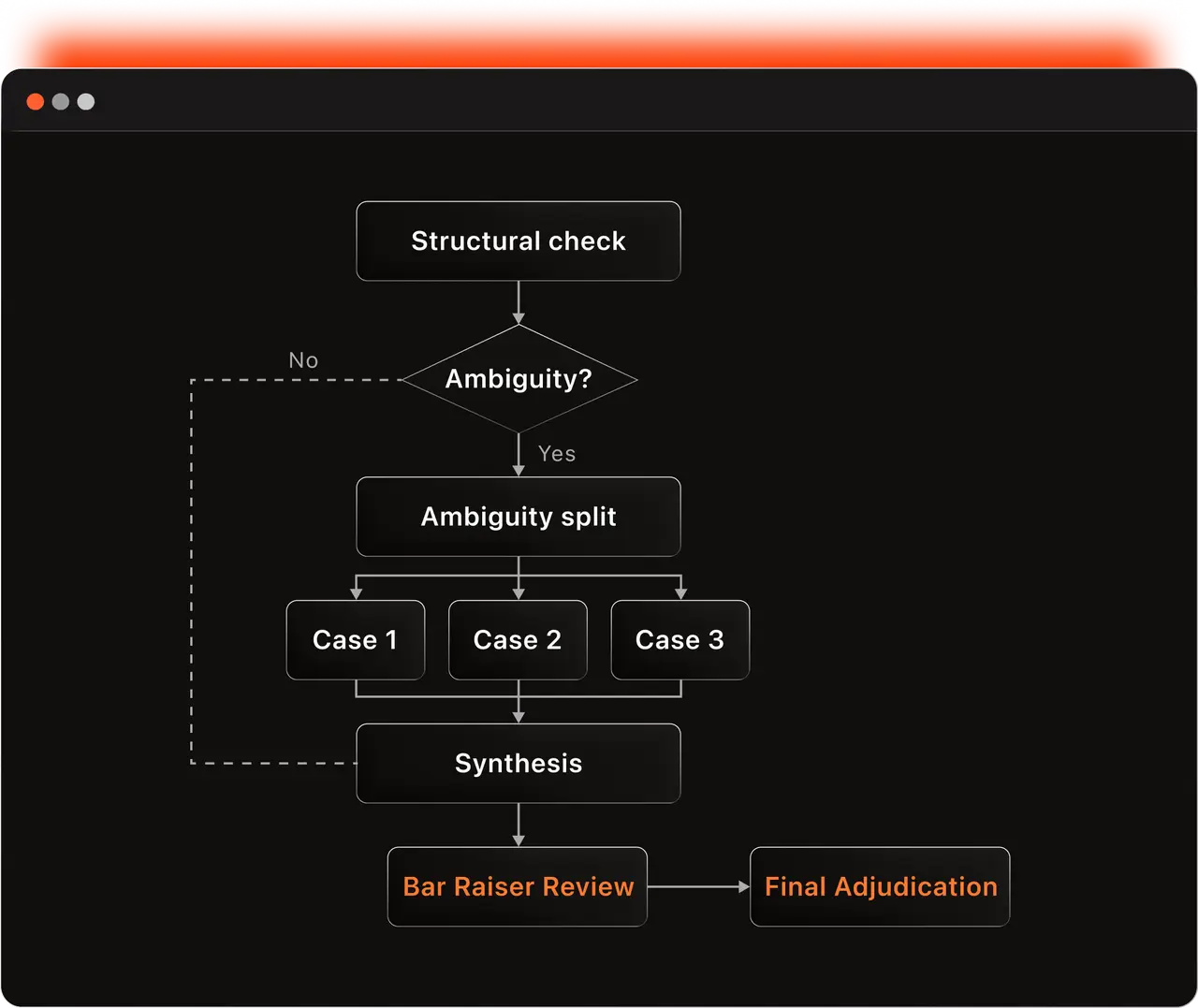

Evaluation is where the pipeline becomes adversarial, and it runs in two stages. First, a multi-agent flow checks that Environment, Query, Test, and Solution agree with each other and with the source material's intent. It looks for structural mismatches (instructions that reference files not in the environment; tests that expect outputs the solution does not produce), ambiguity (tasks with two valid interpretations that would each produce different graded outputs), and intent drift (tasks that are internally consistent but subtly off from the source's intent). Second, the Bar Raiser review shown in the figure makes an adversarial final pass whose job is to catch cases the earlier reviewers let through.
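Of the three failure classes, structural mismatches are the most mechanical, so part of that check can be expressed as plain set logic. The sketch below is an illustration of the idea, not the multi-agent flow described above: the Candidate fields, the FILE_REF regex (which assumes the brief names files as name.ext with one of a few extensions), and the message wording are hypothetical. Ambiguity and intent drift need judgment and are not covered.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical summary of a completed candidate task."""
    instruction: str
    environment_files: set[str]      # files shipped in the Docker image
    test_expected_outputs: set[str]  # files the pytest rubric reads
    solution_outputs: set[str]       # files the oracle actually writes


# Assumption: the brief refers to files as name.ext with one of these extensions.
FILE_REF = re.compile(r"\b[\w\-/]+\.(?:csv|json|txt|py|parquet|yaml)\b")


def structural_mismatches(c: Candidate) -> list[str]:
    problems = []
    referenced = set(FILE_REF.findall(c.instruction))
    # A referenced file must either ship with the environment or be an expected output.
    for name in sorted(referenced - c.environment_files - c.test_expected_outputs):
        problems.append(f"instruction references {name}, which is not in the environment")
    # Every output the tests read must actually be produced by the oracle.
    for name in sorted(c.test_expected_outputs - c.solution_outputs):
        problems.append(f"tests expect {name}, which the oracle never produces")
    return problems
```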

Humans do not manually review every task. They refine the rubrics, audit sampled outputs, and inspect suspicious failures. The task-by-task reviewing itself is handled by specialized reviewer agents.

Signal quality: task-level discrimination

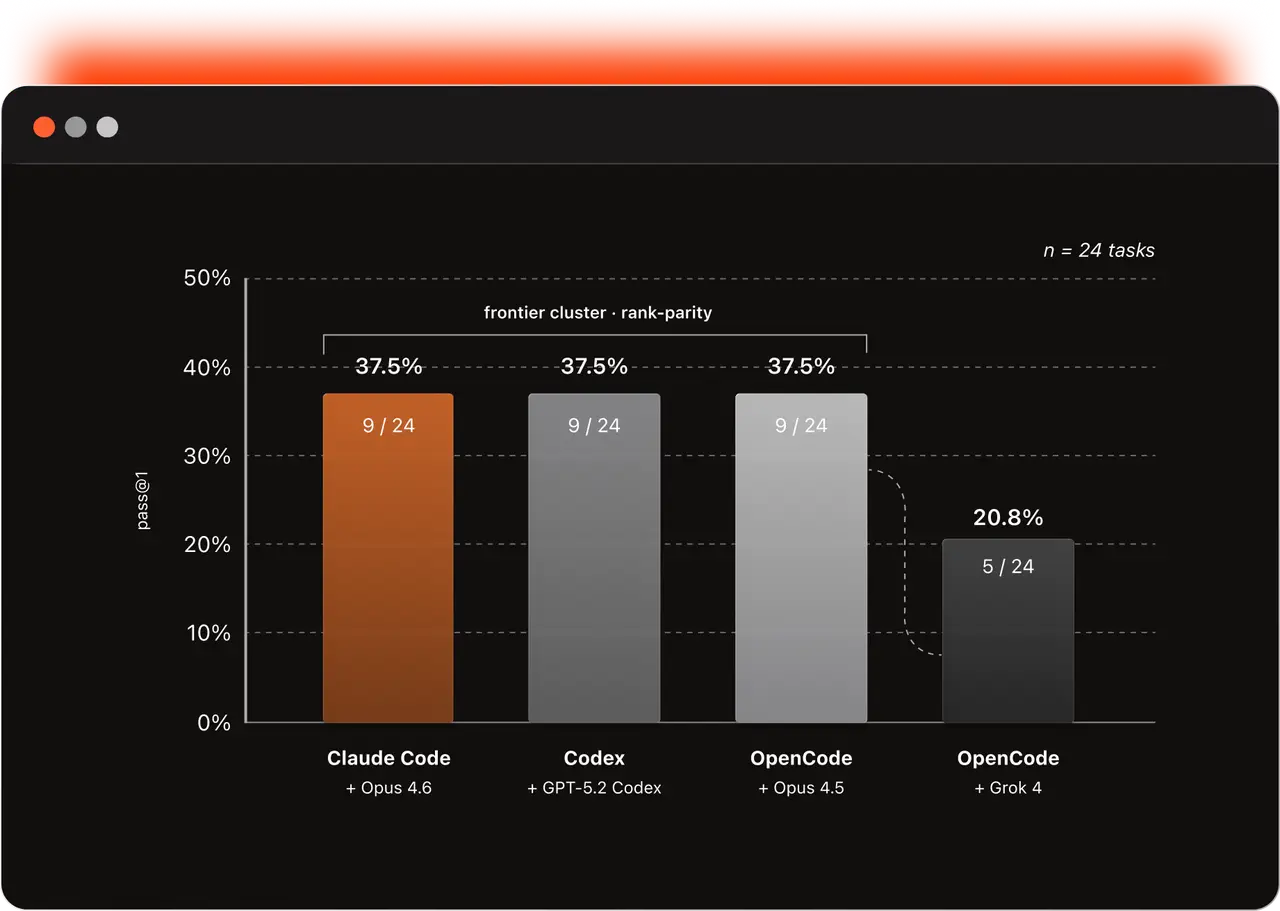

Before using the corpus for post-training, we first checked whether it had a reasonable difficulty profile. A useful training dataset should separate stronger agents from weaker ones: it is most informative when it does not collapse stronger systems into the same score, yet retains enough headroom that weaker systems do not already pass most tasks. To assess this, we ran four agent stacks (agentic CLI + model) on a 24-task diagnostic slice and measured pass@1:

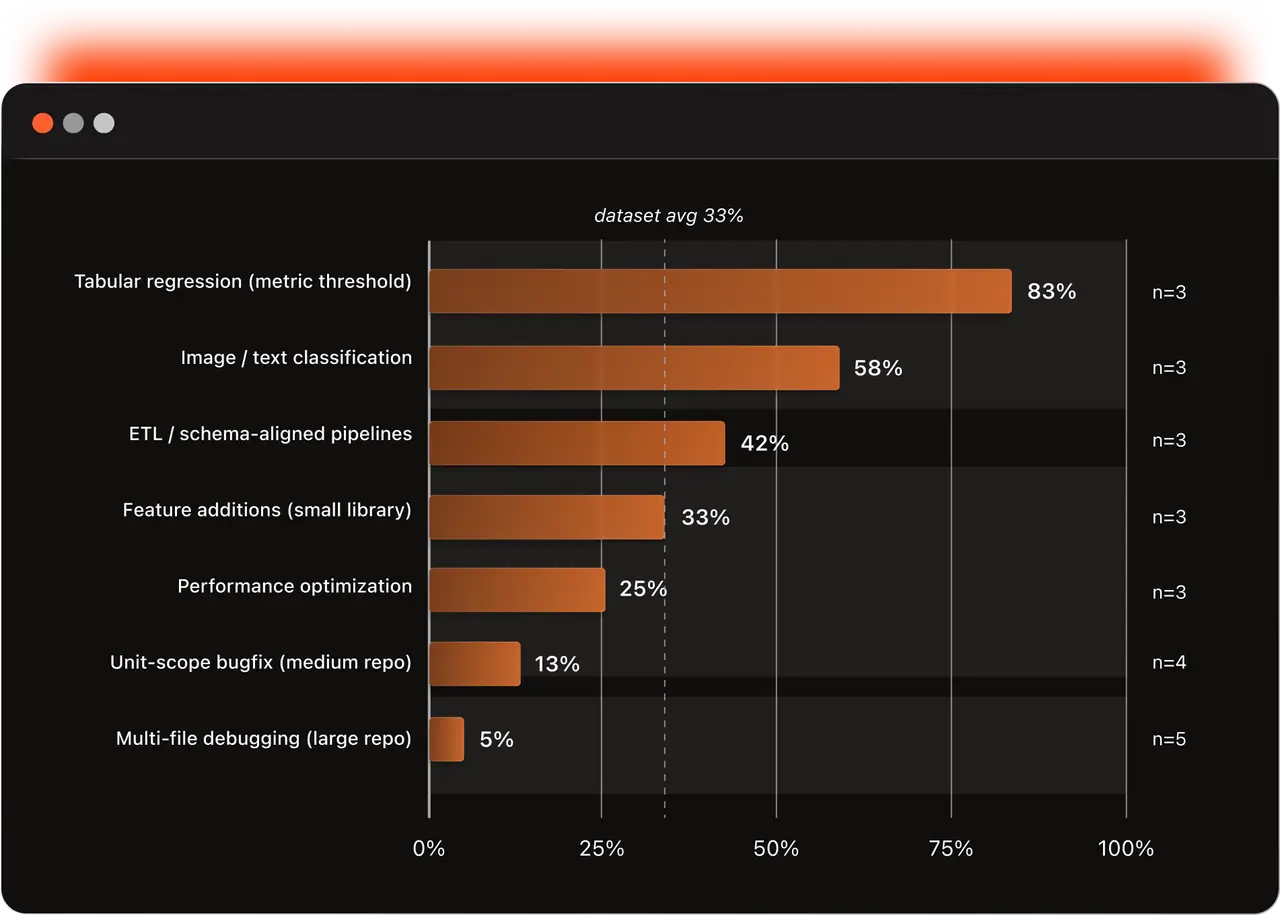

Grouping the 24 tasks by category shows where the discrimination comes from:

Some categories are clearly easy: tabular regression and classification tasks pass on most stacks. At the hard end, multi-file bugfixes in large unfamiliar codebases sit near zero across all four systems. Frontier agents are competitive on structured prediction tasks, but still struggle to localize and fix bugs without prior context. The middle band is where post-training signal lives: ETL tasks, smaller library changes, and some performance optimization problems are neither trivial nor uniformly unsolved. These are the tasks most likely to separate systems and show measurable gains from post-training.
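The three bands can be computed mechanically from a pass@1 matrix of tasks by stacks. The hypothetical band_tasks helper below simplifies "most stacks" to "all stacks": a task is saturated if every stack passes it, unsolved if none does, and discriminative otherwise. A real calibration would likely tune those cut-offs.

```python
from __future__ import annotations


def band_tasks(results: dict[str, list[bool]]) -> dict[str, list[str]]:
    """Split tasks by how many agent stacks solved them (one pass@1 attempt each).

    results maps task_id -> [passed_by_stack_1, passed_by_stack_2, ...].
    """
    bands: dict[str, list[str]] = {"saturated": [], "unsolved": [], "discriminative": []}
    for task_id, passes in results.items():
        solved = sum(passes)
        if solved == len(passes):
            bands["saturated"].append(task_id)       # every stack passes: no contrast
        elif solved == 0:
            bands["unsolved"].append(task_id)        # no stack passes: no contrast either
        else:
            bands["discriminative"].append(task_id)  # the middle band
    return bands
```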

These diagnostics were run during early corpus calibration. We then fixed a 120-task held-out slice (24 per category across the five categories, disjoint from the training pool) as the evaluation set for every post-training number that follows.
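One way to draw such a slice reproducibly is stratified sampling with a fixed seed, sketched below. The function name, the seed value, and the assumption that each task carries exactly one category label are illustrative; the text only fixes the size (24 per category) and the disjointness from the training pool.

```python
from __future__ import annotations

import random
from collections import defaultdict


def held_out_slice(tasks: dict[str, str], per_category: int = 24, seed: int = 0):
    """Sample a fixed number of tasks per category; return (eval_ids, train_ids).

    tasks maps task_id -> category. The two returned sets are disjoint by construction.
    """
    by_cat: dict[str, list[str]] = defaultdict(list)
    for task_id, cat in sorted(tasks.items()):  # sort so the draw is reproducible
        by_cat[cat].append(task_id)
    rng = random.Random(seed)
    eval_ids: set[str] = set()
    for cat in sorted(by_cat):
        pool = by_cat[cat]
        if len(pool) < per_category:
            raise ValueError(f"{cat}: only {len(pool)} tasks, need {per_category}")
        eval_ids.update(rng.sample(pool, per_category))
    train_ids = set(tasks) - eval_ids
    return eval_ids, train_ids
```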

Post-training on Qwen3-32B

We ran one round of supervised fine-tuning on Qwen3-32B, using an oracle-pass filtered subset of AB-Terminal Bench: tasks whose oracle solution cleanly passes the full pytest rubric and whose generation cleared every pipeline stage.
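The two filter conditions translate directly into a predicate. In this sketch the BuiltTask record, the stage names, and the way rubric results are counted are assumptions; a task with an empty rubric is treated as failing, since a vacuous pass proves nothing.

```python
from __future__ import annotations

from dataclasses import dataclass

STAGES = ("environment", "query", "test", "solution", "evaluation")


@dataclass
class BuiltTask:
    task_id: str
    stages_cleared: set[str]  # pipeline stages whose review accepted the artifact
    oracle_tests_passed: int  # rubric assertions the oracle solution passed
    oracle_tests_total: int   # size of the full rubric


def sft_eligible(t: BuiltTask) -> bool:
    """Keep a task only if every stage cleared and the oracle passes the whole rubric."""
    return (
        set(STAGES) <= t.stages_cleared
        and t.oracle_tests_total > 0
        and t.oracle_tests_passed == t.oracle_tests_total
    )


def filter_for_sft(tasks: list[BuiltTask]) -> list[BuiltTask]:
    return [t for t in tasks if sft_eligible(t)]
```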

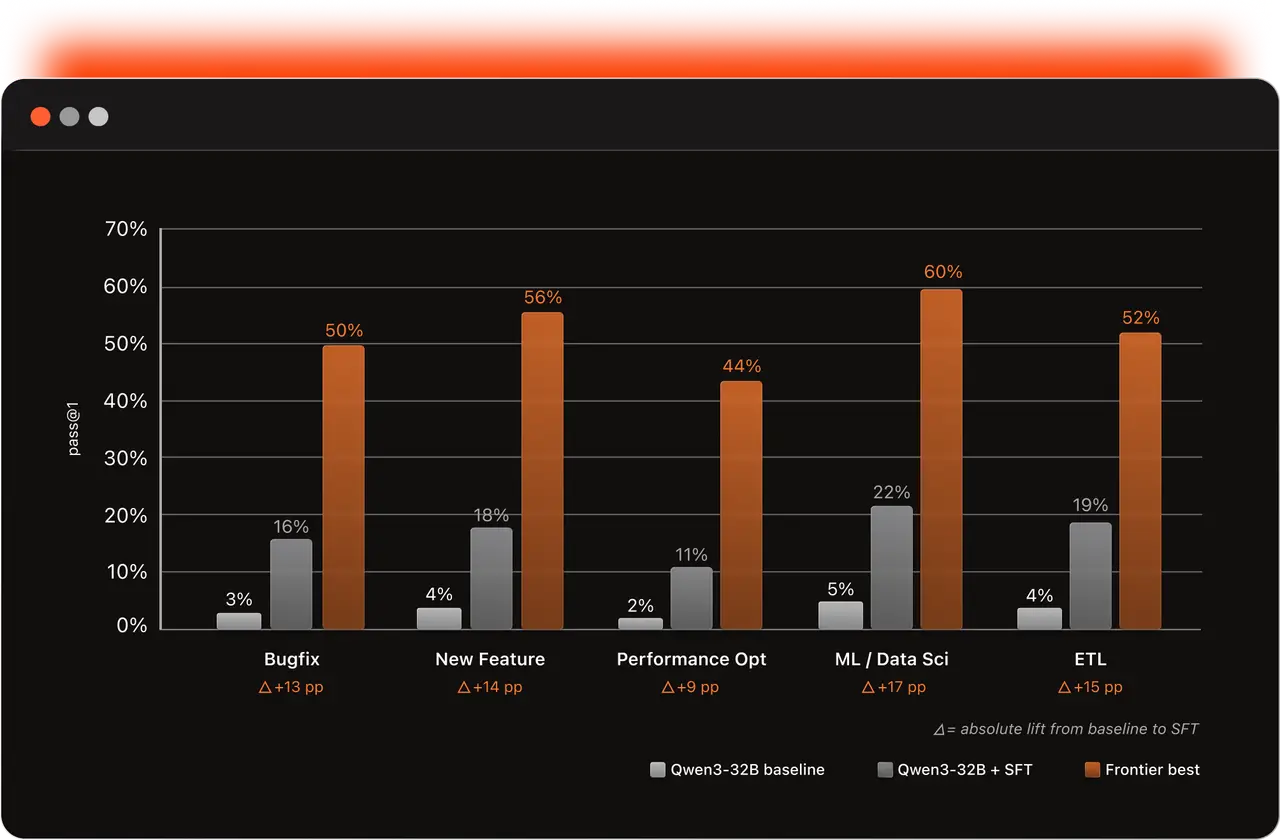

The gains are not uniform across categories.

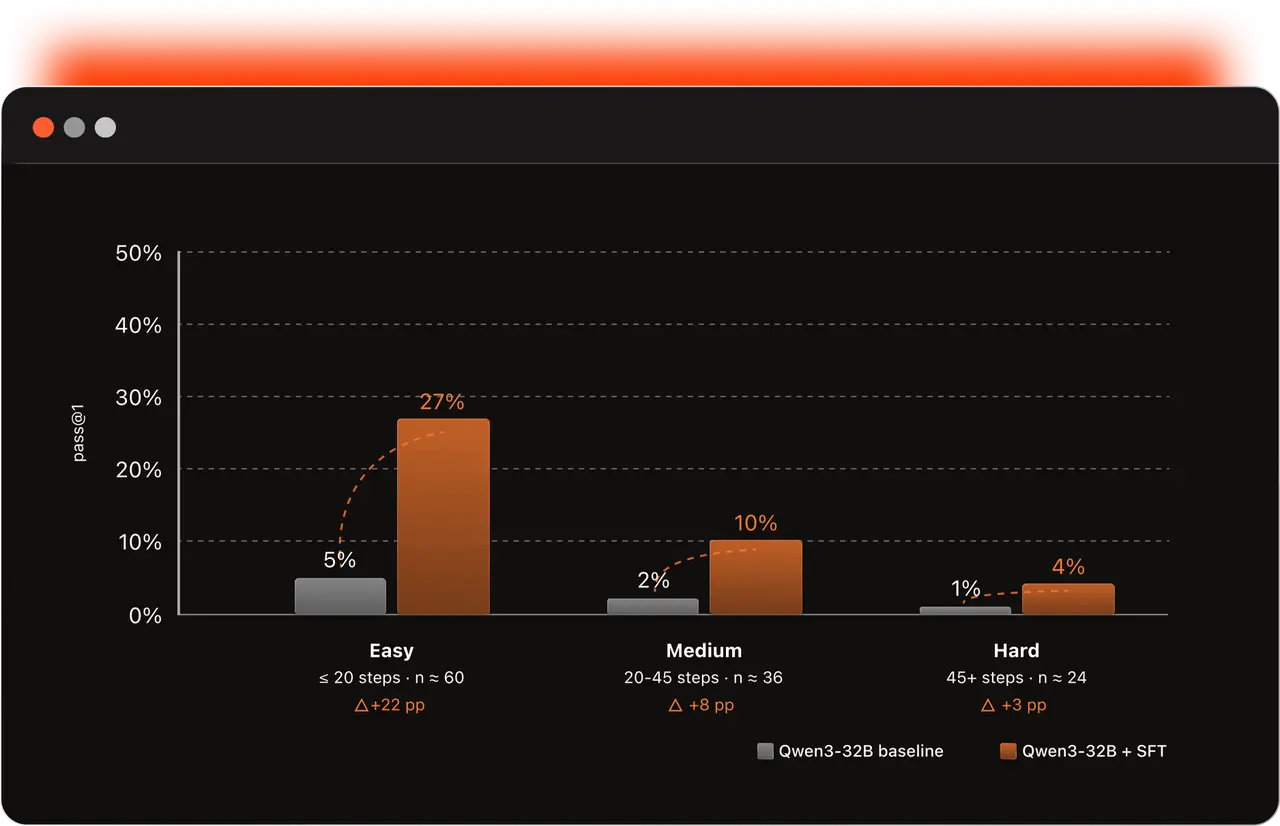

The per-difficulty breakdown confirms the picture.

Closing

AB-Terminal Bench is ultimately an attempt to turn task quality from something anecdotal into something procedural. The hard part is not generating plausible instructions, but building tasks that are executable, verifiable, solvable, and discriminative at the same time. It takes a pipeline that can decompose, check, and reject at every stage before a task ever reaches training. As agent capability becomes bottlenecked by post-training data, manufacturing tasks at this standard is the infrastructure problem that matters.

Currently, we use the corpus internally for our own SFT and RL experiments. If this shape of data fits what you are building, contact Abaka.

- AB-Terminal Bench is an independent Abaka dataset. Not affiliated with Terminal-Bench, Stanford University, or the Laude Institute.

- All numbers are pass@1. Qwen3-32B pass@1 is averaged across seeds under the Terminus-2 harness.
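For readers reproducing the averaging, a minimal sketch: pass@1 for one seed is the fraction of tasks solved on the single attempt, and the reported figure is the mean over seeds. The number of seeds is not stated here, and returning the standard deviation alongside the mean is an addition of this sketch rather than something the reported numbers include.

```python
from __future__ import annotations

from statistics import mean, stdev


def pass_at_1(outcomes: list[bool]) -> float:
    """Fraction of tasks solved on a single attempt per task."""
    if not outcomes:
        raise ValueError("no tasks")
    return sum(outcomes) / len(outcomes)


def pass_at_1_over_seeds(runs: dict[int, list[bool]]) -> tuple[float, float]:
    """Mean pass@1 across independent seeds, plus the spread between seeds.

    runs maps seed -> per-task outcomes, in the same task order for every seed.
    """
    scores = [pass_at_1(outcomes) for outcomes in runs.values()]
    spread = stdev(scores) if len(scores) > 1 else 0.0
    return mean(scores), spread
```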

References:

- Terminal-Bench — terminal-coding agent benchmark (Stanford + Laude Institute).

- Harbor — containerized runtime for Terminal-Bench (Laude Institute).

- Vals AI leaderboard — frontier model rankings on Terminal-Bench 2.0.

- Measuring AI Ability to Complete Long Tasks — METR, March 2025. Reports a 7-month doubling time on agent task horizons over 2019–2025, accelerating to ~4 months in 2024–2025.

- SWE-Bench Verified — human-validated subset of SWE-Bench used as a real-tasks reference.

FAQ

What is AB-Terminal Bench?

AB-Terminal Bench is a structured dataset of containerized coding tasks designed to train and evaluate terminal-based AI agents. Each task includes a runnable environment, instructions, verification tests, and a ground-truth solution.

Why are terminal-based datasets important for AI agents?

Terminal environments reflect real-world engineering workflows. They require agents to execute code, manage files, debug systems, and meet strict constraints, making them essential for training practical coding agents.

What makes a high-quality agent training dataset?

High-quality datasets must be executable, verifiable, and discriminative. This means tasks must run end-to-end, include objective evaluation criteria, and be difficult enough to differentiate between models.

How does AB-Terminal Bench improve model performance?

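Even a single round of supervised fine-tuning on curated tasks can significantly improve agent performance: training on 1.8k filtered samples raised Qwen3-32B from 3.37% to 17.24% pass@1 on a 120-task held-out slice under the Terminus-2 harness. The dataset provides structured reasoning, tool-use trajectories, and precise evaluation signals.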