The primary difference between structured and unstructured data lies in their schema: structured data follows a rigid, tabular format that can be queried with SQL, while unstructured data (text, images, video) lacks a predefined model and is commonly estimated to account for as much as 90% of enterprise data. For Machine Learning pipelines, this distinction shapes nearly every stage: the shift from manual feature engineering to vector embeddings, the need for high-performance GPU computing, and the need for specialized human-in-the-loop annotation of unstructured datasets.

Structured vs Unstructured Data in Machine Learning: How Each One Breaks Your Pipeline

How Structured and Unstructured Data Affect Machine Learning Pipelines Differently

In the modern AI landscape, data is no longer just "numbers in a spreadsheet." As enterprises race to deploy Generative AI and Computer Vision, the architecture of the Machine Learning (ML) pipeline must evolve to handle two fundamentally different types of fuel: Structured and Unstructured data.

Understanding these differences is the key to reducing technical debt and accelerating time-to-market for your AI products.

The Anatomy of Data: Rows vs. Raw Content

Structured Data: The Predictable Backbone

Structured data is organized into rows and columns under a fixed schema, so it can be queried directly with SQL and fed to traditional algorithms with little preparation.

- Examples: Financial transactions, CRM records, inventory logs.

- Storage: Relational databases (RDBMS) like PostgreSQL or data warehouses like Snowflake.

Unstructured Data: The Untapped Goldmine

Unstructured data is information that doesn't fit into a pre-defined data model. It is diverse, massive in scale, and rich in context.

- Examples: Customer support emails, medical X-rays, Zoom recordings, LiDAR point clouds.

- Storage: Data lakes built on object storage such as Amazon S3 or Azure Blob Storage, or NoSQL databases like MongoDB.

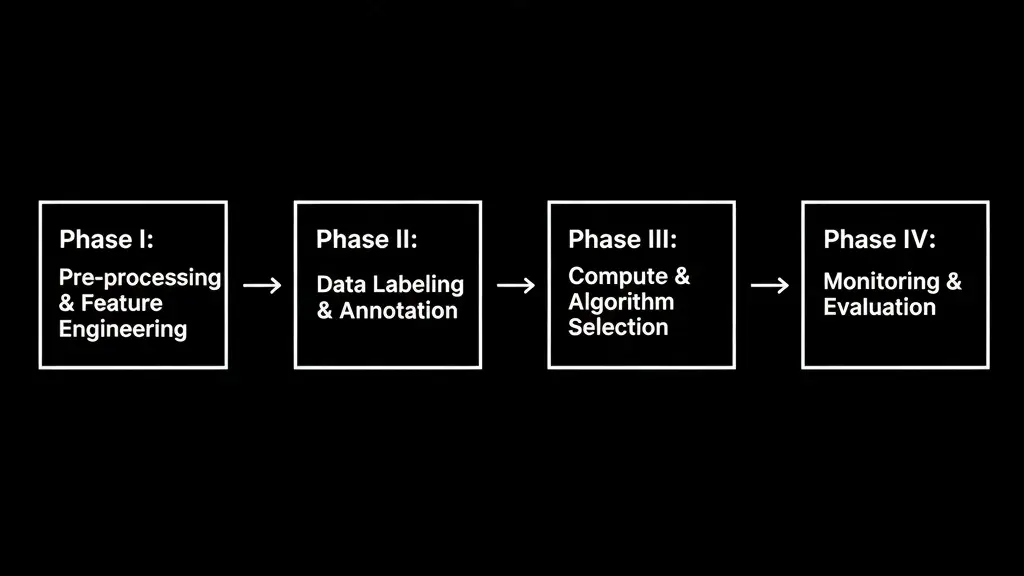

Impact on the ML Pipeline: A Comparative Deep Dive

The choice of data type ripples through every stage of your ML pipeline. Here is how they differ across the four critical phases:

Phase I: Pre-processing & Feature Engineering

- Structured Pipeline: Success depends on Feature Engineering. Data scientists spend weeks transforming raw columns into meaningful inputs (e.g., calculating "customer lifetime value" from purchase history).

- Unstructured Pipeline: Modern pipelines rely on Representation Learning. Instead of hand-built features, they use Embeddings: raw images or text are converted into high-dimensional vectors that capture semantic meaning. With a multimodal encoder that maps images and text into a shared vector space (CLIP-style models, for example), a photo of a cat and the word "feline" land close together, which is how the model "understands" that they are related.
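The two approaches above can be contrasted in a minimal Python sketch. The purchase rows, the 3-dimensional vectors, and the function names are all made up for illustration; a real pipeline would get its embeddings from a trained encoder, which is not shown here.

```python
import math

# Structured side: a hand-crafted feature computed from raw columns.
# The purchase rows below are made-up illustration data.
purchases = [
    {"customer_id": "c1", "amount": 40.0},
    {"customer_id": "c1", "amount": 60.0},
    {"customer_id": "c2", "amount": 15.0},
]

def total_spend_per_customer(rows):
    """Sum purchase amounts per customer (a crude lifetime-value proxy)."""
    totals = {}
    for row in rows:
        totals[row["customer_id"]] = totals.get(row["customer_id"], 0.0) + row["amount"]
    return totals

# Unstructured side: compare items through their embedding vectors.
def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical 3-dimensional embeddings; real encoders emit far more dimensions.
cat_photo = [0.9, 0.1, 0.2]
word_feline = [0.8, 0.2, 0.1]
word_invoice = [0.1, 0.9, 0.7]

ltv = total_spend_per_customer(purchases)
sim_related = cosine_similarity(cat_photo, word_feline)
sim_unrelated = cosine_similarity(cat_photo, word_invoice)
```

The structured feature is an explicit rule a person wrote down, while the unstructured comparison depends entirely on how the encoder placed each item in vector space.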

Phase II: Data Labeling & Annotation

- Structured: Labeling is often "implicit." For instance, a "churn" label is automatically generated if a user cancels their subscription.

- Unstructured: This is where the bottleneck occurs. Unstructured data requires Human-in-the-Loop (HITL) annotation. Whether it’s bounding boxes for autonomous driving or Named Entity Recognition (NER) for legal docs, you need a robust platform like Abaka AI to manage high-quality labeling at scale.
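As a rough illustration of "implicit" labeling on the structured side, the sketch below derives a churn label from a cancellation date with no human annotator involved. The record layout, the field name `cancelled_on`, and the cutoff date are assumptions chosen for the example.

```python
from datetime import date

# Made-up subscription records; `cancelled_on` is None for active users.
subscriptions = [
    {"user_id": "u1", "cancelled_on": date(2024, 3, 10)},
    {"user_id": "u2", "cancelled_on": None},
    {"user_id": "u3", "cancelled_on": date(2024, 6, 1)},
]

def churn_labels(records, as_of):
    """Label a user 1 (churned) if they cancelled on or before `as_of`, else 0."""
    return {
        r["user_id"]: int(r["cancelled_on"] is not None and r["cancelled_on"] <= as_of)
        for r in records
    }

labels = churn_labels(subscriptions, as_of=date(2024, 4, 1))
```

No comparable rule exists for deciding where a pedestrian sits in an image or which tokens in a contract name a party, which is why those labels have to come from people.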

Phase III: Compute & Algorithm Selection

- Structured: You can often achieve state-of-the-art results using Tree-based models (XGBoost, LightGBM) on standard CPUs. Training is fast and cost-effective.

- Unstructured: This is the domain of Deep Learning (Transformers, CNNs). Training these models from scratch typically requires large GPU/TPU clusters. To manage costs, pipelines often use Transfer Learning: taking a pre-trained model (like BERT or ResNet) and fine-tuning it on specific enterprise data, which usually needs far less compute.
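The transfer-learning idea can be sketched in plain Python using its simplest variant: a frozen backbone with a small trainable head. Here `frozen_backbone` is a hypothetical stand-in for a real pre-trained encoder, and the four data points are invented; in practice the backbone would be a model loaded from a deep learning library.

```python
import math

def frozen_backbone(x):
    """Hypothetical stand-in for a pretrained encoder; it is run but never updated."""
    return [x[0] + x[1], x[0] - x[1]]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_head(features, labels, lr=0.5, epochs=1000):
    """Fit a logistic-regression head on frozen features with batch gradient descent."""
    w = [0.0] * len(features[0])
    b = 0.0
    n = len(features)
    for _ in range(epochs):
        grad_w = [0.0] * len(w)
        grad_b = 0.0
        for f, y in zip(features, labels):
            err = sigmoid(sum(wi * fi for wi, fi in zip(w, f)) + b) - y
            for j, fj in enumerate(f):
                grad_w[j] += err * fj / n
            grad_b += err / n
        w = [wi - lr * g for wi, g in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b

def predict(x, w, b):
    """Run the frozen backbone, then the trained head, and threshold at 0.5."""
    f = frozen_backbone(x)
    return int(sigmoid(sum(wi * fi for wi, fi in zip(w, f)) + b) >= 0.5)

# Tiny made-up dataset: two inputs per example, binary label.
inputs = [(0.0, 0.0), (0.2, 0.1), (0.9, 1.0), (1.0, 0.8)]
labels = [0, 0, 1, 1]

features = [frozen_backbone(x) for x in inputs]  # features are computed once
w, b = train_head(features, labels)              # only the head's weights change
predictions = [predict(x, w, b) for x in inputs]
```

Only the head's handful of parameters are trained, which is the reason this approach is so much cheaper than training the whole network; full fine-tuning would also update the backbone.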

Phase IV: Monitoring & Evaluation

- Structured: Monitoring focuses on Data Drift—checking if the statistical distribution of features (like average age) has changed.

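- Unstructured: Evaluation is more nuanced. You must monitor Embedding Drift and use task-specific metrics like mAP (mean Average Precision) for vision or ROUGE/BLEU scores for NLP to detect degradation. Because ROUGE and BLEU only measure n-gram overlap with reference text, catching hallucinations usually requires an additional factuality check or human review.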
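Both kinds of drift can be approximated with very simple statistics. The sketch below is one possible approach under stated assumptions, not a standard: it measures feature drift as a shift in the mean (in units of the reference standard deviation) and embedding drift as the cosine distance between the centroids of two embedding sets. All data and function names are invented for illustration.

```python
import math
import statistics

def mean_shift_in_std(reference, current):
    """Shift of the current mean from the reference mean, in reference standard deviations."""
    shift = abs(statistics.mean(current) - statistics.mean(reference))
    return shift / statistics.stdev(reference)

def centroid(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]

def cosine_distance(a, b):
    """1 minus cosine similarity; 0 means the vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

# Made-up data: customer ages (structured) and 2-d embeddings (unstructured).
ages_train = [30, 32, 34, 36, 38]
ages_live = [40, 42, 44, 46, 48]
emb_train = [[1.0, 0.0], [0.9, 0.1], [1.0, 0.1]]
emb_live = [[0.0, 1.0], [0.1, 0.9], [0.1, 1.0]]

age_drift = mean_shift_in_std(ages_train, ages_live)
embedding_drift = cosine_distance(centroid(emb_train), centroid(emb_live))
```

What counts as "too much" drift is a judgment call for each team; any alert threshold applied to these two numbers would be an assumption to tune against your own data.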

The Hybrid Challenge: Bridging the Gap

Much of the data in modern applications is semi-structured. Take an email: it has structured headers (To, From, Date) and an unstructured body (the text).

A high-performing ML pipeline must be able to ingest both. This is where many companies struggle—managing a fragmented stack of tools that don't talk to each other.
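The email example above can be sketched with Python's standard `email` package, which separates the header fields from the body. The message content and addresses are invented for illustration, and what happens to each half afterwards (a table on one side, a text encoder on the other) is left out.

```python
from email import message_from_string
from email.utils import parsedate_to_datetime

# A made-up raw message: headers, a blank line, then free text.
raw_email = (
    "From: alice@example.com\n"
    "To: support@example.com\n"
    "Date: Mon, 01 Jan 2024 09:30:00 +0000\n"
    "Subject: Refund request\n"
    "\n"
    "Hi, my order arrived damaged and I would like a refund.\n"
)

msg = message_from_string(raw_email)

# Structured part: header fields map cleanly onto table columns.
structured = {
    "from": msg["From"],
    "to": msg["To"],
    "date": parsedate_to_datetime(msg["Date"]),
}

# Unstructured part: free text that would be passed to a text encoder.
unstructured = msg.get_payload().strip()
```

A pipeline that handles both halves can join the resulting columns with features derived from the body text, keyed on the same message.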

Why Abaka AI is the Catalyst for Your ML Pipeline

At Abaka AI, we specialize in turning data chaos into model clarity. Our platform is designed to handle the heavy lifting of the unstructured data lifecycle while maintaining the precision required for structured analysis.

- Unified Data Ingestion: Connect your SQL databases and your S3 buckets into a single workflow.

- AI-Assisted Labeling: Reduce annotation time by 60% using our pre-labeling algorithms for text and vision.

- Embedding Management: Visualize your unstructured data clusters to identify edge cases and outliers before they hit production.

- Seamless Integration: Export your high-quality datasets directly into your training environment with one click.

The gap between structured and unstructured data is narrowing as AI becomes more sophisticated. The winners will be the organizations that can build a unified pipeline capable of extracting value from both.

Ready to optimize your ML Pipeline? Book a Demo with Abaka AI.

FAQ: Structured vs. Unstructured Data in Machine Learning

Q: What is the main difference between structured and unstructured data in ML?

A: The primary difference is the schema. Structured data follows a rigid, tabular format that can be queried with SQL and suits tree-based models. Unstructured data (text, images, video) lacks a predefined model, is commonly estimated to account for as much as 90% of enterprise data, and generally requires Deep Learning for processing.

Q: How does the preprocessing of unstructured data differ from structured data?

A: Structured data relies on manual feature engineering (e.g., column transformations). In contrast, unstructured data uses representation learning to create vector embeddings, converting raw content into high-dimensional numerical values that capture semantic meaning.

Q: Why is human-in-the-loop (HITL) annotation critical for unstructured datasets?

A: Unlike structured data, where labels are often implicit (e.g., transaction records), unstructured data requires explicit interpretation. Humans must provide context through tasks like bounding box annotation for vision or NER for text to ensure model accuracy.

Q: What are the compute requirements for structured vs. unstructured ML pipelines?

A: Structured pipelines are cost-effective and typically run on CPUs using algorithms like XGBoost. Unstructured pipelines require GPUs/TPUs to handle the massive parallel processing demands of Transformers and CNNs.

Q: How do you monitor performance in an unstructured data pipeline?

A: Beyond standard data drift, unstructured pipelines must monitor embedding drift. Evaluation uses specialized metrics such as mAP (mean Average Precision) for computer vision and ROUGE/BLEU scores for natural language processing to detect degradation. Because ROUGE and BLEU only measure overlap with reference text, detecting hallucination usually needs an additional factuality check or human review.

