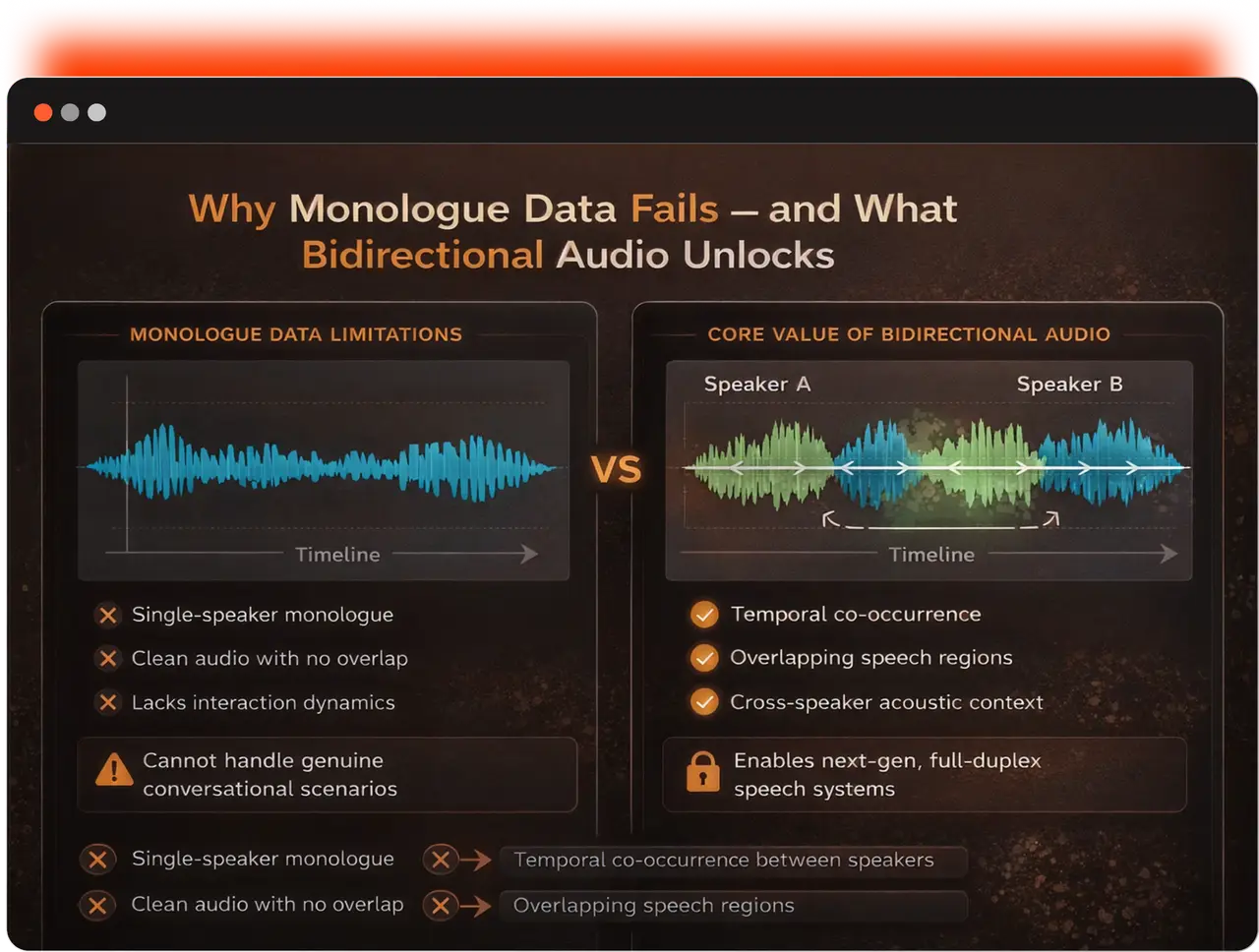

Speech models fail in real conversations because they are trained on monologue data. Bidirectional audio datasets capture overlap, turn-taking, and interaction dynamics, enabling models to understand and operate in real dialogue scenarios.


20,000+ Hours of Bidirectional Speech: Train Models That Handle Real Talk

Training speech models that truly understand conversations requires more than monologues; it requires data that captures the structure of dialogue. Abaka AI has built a bidirectional conversational speech dataset covering Chinese and six major Eurasian languages, recorded entirely through real human-to-human interactions, with source-level dual-channel isolation and more than 20,000 hours of audio.

「Key Dataset Metrics」

| Metric | Details |
| --- | --- |
| Total Duration | 20,000+ hours |
| Chinese Data Volume | Approximately 8,000–10,000 hours (available for purchase) |
| Eurasian Languages | Covers 6 languages, ~1,000 hours per language |
| Collection Method | 100% real human-to-human recordings |
| Channel Setup | Dual-channel, source-level physical isolation |

1. Why Monologue Data Fails for Conversational Speech Models

Most widely used datasets for training and evaluating large-scale speech models — such as LibriSpeech, GigaSpeech, WenetSpeech, and AISHELL — share an implicit assumption: speech is produced as a monologue by a single speaker. This assumption is a reasonable engineering simplification for traditional read-style ASR systems. However, when models are required to handle real, dynamic conversational scenarios, this simplification fundamentally limits what they can learn.

In real conversations, interaction happens simultaneously. While Speaker A is talking, Speaker B is often generating real-time feedback. Speakers overlap, interrupt each other, and may inject new information before the other finishes speaking. Their speech rate, tone, and lexical choices continuously adapt based on what the other person just said.

These interaction dynamics are structural components of dialogue. They cannot be derived from monologue data, nor can they be faithfully simulated through simple data augmentation or synthetic generation. In particular, monologue data lacks:

- temporal co-occurrence between speakers

- overlapping speech regions

- cross-speaker acoustic context

These signals are essential for training next-generation full-duplex speech systems.

2. Dual-Channel Recording vs Source Separation: What’s the Difference?


2.1 Source Separation Data (Scalable Approach)

This type of data is derived from single-channel mixed recordings, which are separated into individual tracks using blind source separation (BSS) or neural separation models. While this approach is scalable — and Abaka AI also maintains datasets in this category — it has inherent limitations:

- Overlapping regions are artificially reconstructed

- “Ghosting” artifacts and signal leakage are common

- Speaker boundaries are estimated rather than ground truth

This type of data is more suitable for large-scale ASR pretraining.

2.2 Source-Level Dual-Channel Recording (High-Quality Approach)

This is Abaka AI’s primary “bidirectional audio” solution. Two speakers are recorded simultaneously using physically isolated channels (left/right), ensuring that signals are never mixed at the source.

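The key difference between the two approaches lies in how overlapping speech is handled. Separation algorithms must compromise when two speakers talk simultaneously, often producing discontinuities or artifacts where the two voices compete. With source-level isolation, each channel keeps its speaker's complete signal throughout the overlap.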

For teams building full-duplex voice assistants or real-time multi-speaker transcription systems, models must learn to:

- produce output while another speaker is talking

- detect precise turn-taking boundaries

- process genuine overlapping speech

These capabilities can only be learned from data that preserves real overlap — making bidirectional audio fundamentally irreplaceable.
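As a rough illustration of what channel isolation means at the file level, the sketch below splits a stereo recording into one sample stream per speaker. It assumes 16-bit PCM WAV delivery with one speaker per channel; the actual delivery format is not stated here, and `split_stereo` is a hypothetical helper name rather than part of any Abaka AI tooling.

```python
import io
import struct
import wave


def split_stereo(wav_file):
    """Split a 16-bit stereo PCM WAV into (left, right) sample tuples.

    Assumption: the left channel holds one speaker and the right channel
    the other, as in a source-level dual-channel recording.
    """
    with wave.open(wav_file, "rb") as wav:
        if wav.getnchannels() != 2 or wav.getsampwidth() != 2:
            raise ValueError("expected 16-bit stereo PCM")
        frames = wav.readframes(wav.getnframes())
    # WAV PCM is little-endian and interleaved: L0, R0, L1, R1, ...
    samples = struct.unpack(f"<{len(frames) // 2}h", frames)
    return samples[0::2], samples[1::2]


# Build a tiny in-memory stereo file so the sketch runs without real data.
buffer = io.BytesIO()
with wave.open(buffer, "wb") as out:
    out.setnchannels(2)
    out.setsampwidth(2)
    out.setframerate(16000)
    out.writeframes(struct.pack("<6h", 100, -100, 200, -200, 300, -300))
buffer.seek(0)

left, right = split_stereo(buffer)
print(left, right)
```

Because the two streams never shared a channel, no separation model is involved: each speaker's samples are simply every second value in the interleaved data.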

3. How High-Quality Conversational Speech Data Is Collected

All Abaka AI conversational data is recorded through real human-to-human interactions under controlled conditions, using dual-channel capture with physical isolation at the source. No post-processing separation is applied.

Conversation topics and speaker turns are entirely natural, resulting in authentic conversational phenomena such as interruptions, repair sequences, backchannels, and overlap.

Real Data Sample (French Subset)


- File ID: A0009_S0014

- Language: French

- Scenario: Everyday natural conversation

「Transcript Sample」

| Time Interval (s) | Channel / Speaker | Transcript |
| --- | --- | --- |
| [2.310, 7.820] | L (G5112, Female, France) | Tu sais quoi ? J’ai finalement décidé de changer de travail. Je n’en peux plus. |
| [7.950, 9.110] | R (G5084, Male, France) | Ah vraiment ? |
| [9.040, 15.380] | L (G5112, Female, France) | Oui… Le manager ne comprend pas du tout ce qu’on fait. À chaque réunion— |
| [14.720, 16.050] | R (G5084, Male, France) | [Overlap] Oui, oui, je vois. |
| [15.190, 21.640] | L (G5112, Female, France) | [Overlap] —il remet tout en question, même des décisions prises le mois dernier. |
| [20.880, 22.310] | R (G5084, Male, France) | [Overlap] Mais— |
| [21.950, 26.700] | L (G5112, Female, France) | [Overlap] Et la semaine dernière, j’ai reçu une autre offre. Meilleur salaire, équipe plus petite. |
| [27.010, 28.490] | R (G5084, Male, France) | Donc tu vas accepter ? |
| [28.600, 29.350] | L (G0000, N/A) | [SOUNDING] |
| [29.440, 33.910] | L (G5112, Female, France) | Je ne suis pas encore sûre. J’hésite… Tu vois, ce n’est pas si simple. |
| [34.200, 35.080] | R (G0000, N/A) | [*] |
| [35.190, 40.620] | R (G5084, Male, France) | Écoute, à ta place, je signerais tout de suite. La loyauté a ses limites, tu sais. |

(Note: Between 14.72 s and 22.31 s, Speaker R repeatedly inserts backchannel feedback and attempts to take the turn while Speaker L continues speaking, forming a typical overlap and secondary-entry pattern.)
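Because each channel carries its own timestamps, overlap regions can be recovered by intersecting the two channels' intervals. The sketch below is a minimal illustration using the speech rows of the sample above (the two special-tag rows are left out); `merged_overlaps` is a hypothetical helper, not part of any delivered tooling.

```python
# Speech intervals in seconds, copied from the transcript sample above.
LEFT = [(2.310, 7.820), (9.040, 15.380), (15.190, 21.640),
        (21.950, 26.700), (29.440, 33.910)]
RIGHT = [(7.950, 9.110), (14.720, 16.050), (20.880, 22.310),
         (27.010, 28.490), (35.190, 40.620)]


def merged_overlaps(left, right):
    """Return merged time spans where a left and a right segment co-occur."""
    raw = []
    for l_start, l_end in left:
        for r_start, r_end in right:
            start, end = max(l_start, r_start), min(l_end, r_end)
            if start < end:  # the two intervals genuinely intersect
                raw.append((start, end))
    merged = []
    for start, end in sorted(raw):
        if merged and start <= merged[-1][1]:
            # Extend the previous span instead of double-counting time.
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


overlaps = merged_overlaps(LEFT, RIGHT)
total = sum(end - start for start, end in overlaps)
for start, end in overlaps:
    print(f"{start:.3f}-{end:.3f}")
print(f"total overlap: {total:.2f} s")
```

On this sample the function yields four merged spans totalling about 2.52 s, including a brief 70 ms overlap at 9.04 s that carries no [Overlap] marker in the transcript.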

4. Fine-Grained Annotation System

To maximize data utility, Abaka AI provides a rigorous and highly granular annotation framework:

- Timestamp precision: up to 10 ms, accurately marking overlap regions between the L and R channels

- Channel separation: left/right channels strictly correspond to different speakers

- Speaker ID: supports speaker-level filtering and consistency checks

- Gender & language tags: labeled per segment; multilingual conversations are tagged at segment level

- Transcription standard: preserves disfluencies (false starts, fillers, hesitations)

- Silence: [*]

- Non-verbal sounds: [SOUNDING]
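To show how these annotation fields might be consumed, here is a minimal parsing sketch. It assumes rows laid out like the transcript sample in this article (interval, channel with speaker metadata, text); the real delivery schema may differ, and `Segment`, `parse_row`, and `ROW_PATTERN` are hypothetical names.

```python
import re
from dataclasses import dataclass

# Assumed row layout, mirroring the transcript sample in this article:
# [start, end] | CHANNEL (speaker_id, ...) | text |
ROW_PATTERN = re.compile(
    r"\|?\s*\[(?P<start>\d+\.\d+),\s*(?P<end>\d+\.\d+)\]\s*\|\s*"
    r"(?P<channel>[LR])\s*\((?P<speaker>[^,)]+)[^)]*\)\s*\|\s*"
    r"(?P<text>.*?)\s*\|?\s*$"
)
SPECIAL_TAGS = {"[*]", "[SOUNDING]"}
OVERLAP_MARK = "[Overlap]"


@dataclass
class Segment:
    start: float
    end: float
    channel: str
    speaker_id: str
    text: str
    is_overlap: bool
    is_special_tag: bool


def parse_row(line):
    """Parse one annotation row into a Segment, or raise ValueError."""
    match = ROW_PATTERN.match(line.strip())
    if match is None:
        raise ValueError(f"unrecognized row: {line!r}")
    text = match["text"]
    is_overlap = text.startswith(OVERLAP_MARK)
    if is_overlap:
        # Keep the flag, drop the marker from the transcript text.
        text = text[len(OVERLAP_MARK):].strip()
    return Segment(
        start=float(match["start"]),
        end=float(match["end"]),
        channel=match["channel"],
        speaker_id=match["speaker"].strip(),
        text=text,
        is_overlap=is_overlap,
        is_special_tag=text in SPECIAL_TAGS,
    )


speech = parse_row("[14.720, 16.050] | R (G5084, Male, France) | [Overlap] Oui, oui, je vois. |")
tag = parse_row("[34.200, 35.080] | R (G0000, N/A) | [*] |")
print(speech)
print(tag)
```

Flagging special-tag rows separately makes it easy to exclude them from speech statistics while keeping their timestamps available.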

5. Multilingual Conversational Speech Dataset Across 7 Languages

Abaka AI's bidirectional audio dataset currently covers Chinese and six major Eurasian languages, with a total duration exceeding 20,000 hours.

「Language Coverage」

| Language | Data Volume (Hours) | Scenario Type |
| --- | --- | --- |
| Chinese (Mandarin) | 8,000–10,000 | Natural conversation |
| French | ~1,000 | Natural conversation |
| German | ~1,000 | Natural conversation |
| Korean | ~1,000 | Natural conversation |
| Portuguese | ~1,000 | Natural conversation |
| Japanese | ~1,000 | Natural conversation |
| Italian | ~1,000 | Natural conversation |

6. What Can Bidirectional Audio Data Be Used For?

High-quality bidirectional audio significantly improves performance across a range of speech AI scenarios:

「Training Scenarios and the Value of Bidirectional Audio Data」

| Training Scenario | Limitations of Monologue Data | Core Value of Bidirectional Data |
| --- | --- | --- |
| Full-duplex voice assistants | Cannot learn when to speak or when to wait | Provides real turn-taking and overlap signals, enabling models to learn interaction timing |
| Real-time speech transcription (meetings/calls) | Lacks cross-speaker acoustic context | Dual-channel independent signals preserve full speaker response dynamics |
| Multilingual speaker diarization | Language switching is easily mistaken for speaker switching | Segment-level language tagging combined with speaker ID enables precise separation |
| Conversational ASR | Highly sensitive to overlapping speech interference | Significantly reduces WER (Word Error Rate) in overlapping conditions |
| Read-style speech synthesis | Lacks realism | Learns natural disfluencies, interruptions, and repair sequences |
| Speech translation / simultaneous interpretation | Lacks natural speech rhythm and cross-lingual context alignment | Provides turn-level alignment in bilingual conversations while preserving prosodic features |
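For readers who want to check WER claims on their own evaluation sets, WER is conventionally the word-level edit distance (substitutions, deletions, and insertions) divided by the number of reference words. The sketch below is a minimal implementation of that standard definition. The reference is taken from the French sample above, the hypothesis is a made-up ASR output, and text normalization is reduced to lowercasing by hand.

```python
def word_error_rate(reference, hypothesis):
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] is the edit distance between the processed reference
    # prefix and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


reference = "je ne suis pas encore sûre"
hypothesis = "je suis pas encore sûr"  # hypothetical ASR output
wer = word_error_rate(reference, hypothesis)
print(f"WER = {wer:.3f}")
```

Here one deletion ("ne") and one substitution ("sûre" to "sûr") over six reference words give a WER of 2/6. Scoring overlapping and non-overlapping regions separately is one way to see where conversational data helps.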

Conclusion

The next frontier of speech AI is no longer just recognizing speech — it is truly understanding conversation. This means understanding the complex interactions that occur when two people speak simultaneously.

Achieving this requires getting the data right:

- real human recordings

- source-level dual-channel isolation

- full preservation of conversational structure

This is the core philosophy behind Abaka AI’s bidirectional audio technology.

Get Access

We provide evaluation sample packs for target languages, enabling full technical validation before procurement. For domain-specific filtering or custom dataset requests, feel free to contact us for further discussion.

FAQs

What is bidirectional audio data?

Bidirectional audio data consists of dual-channel recordings of real human conversations, where each speaker is captured on a separate channel. This preserves overlapping speech, interruptions, backchannels, and the natural interaction signals that monologue data cannot replicate.

How is bidirectional audio different from standard speech datasets?

Standard datasets typically use single-speaker recordings or mixed audio where speakers are blended into one channel. Bidirectional datasets keep each speaker isolated, retaining turn-taking dynamics, response latency, and real conversational flow: the raw material that full-duplex models need.

What are bidirectional audio datasets used for?

They are used to train full-duplex voice assistants, conversational AI systems, multi-speaker ASR, real-time transcription, speaker diarization, and dialogue-aware language models. Any system that needs to operate in live conversation, not just transcribe it, benefits from bidirectional training data.

Why does channel separation matter for model training?

When speakers share a single channel, the model learns to handle audio as a stream, not a dialogue. Separate channels let the model learn who speaks when, how speakers respond to each other, and how to handle simultaneous speech, all of which are critical for low-latency, natural-sounding voice AI.

What languages does Abaka AI's bidirectional dataset cover?

Our dataset spans 7 languages: Mandarin Chinese, French, German, Korean, Portuguese, Japanese, and Italian, with consistent dual-channel quality across all of them. This makes it particularly valuable for multilingual conversational AI development.

How is the data collected and quality-controlled?

All recordings are sourced from real human-to-human conversations across diverse topics and speaking styles. Each session undergoes channel alignment verification, noise filtering, and annotation review to ensure the interaction dynamics are intact and model-ready.